- Offer Profile

The Computational Learning and Motor Control Lab has its research focus in the areas of neural computation for sensorimotor control and learning. Neural Computation attempts to combine knowledge from biology with knowledge from physics and engineering in order to develop a more fundamental and formal understanding of information processing in complex systems.

Content Overview

- Research in the CLMC-Lab encompasses a broad spectrum, ranging from applied to theoretical research and from research with robotic systems to research on biological topics, including experiments with human subjects and topics in computational neuroscience. The following list gives examples of the research foci of recent years:

Statistical Learning

- Scalable Statistical Learning for Robotics

We are interested in supervised learning methods that accomplish nonlinear coordinate transformations and achieve robust internal models for our autonomous high-dimensional anthropomorphic systems.

Our focus is on the development of new learning algorithms for complex movement systems, where learning may proceed in an incremental fashion (i.e. sequential availability of data points). Using Bayesian approaches and graphical models, we aim to create algorithms that are fast, robust and based on a solid statistical foundation, yet scalable to extremely high dimensions.

Recent work has included the Bayesian Backfitting Relevance Vector Machine and a related variant (applied to EMG activity prediction). Both produce computationally efficient solutions and offer properties such as feature detection and automatic relevance determination. An augmented version of the algorithm's graphical model gives a Bayesian Factor Analysis regression model that performs noise cleanup. It offers significant improvements in generalization performance, as has been demonstrated in parameter identification tasks for our robotic platforms.
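To make the idea of incremental learning concrete (data points arriving one at a time), here is a minimal sketch using classical recursive least squares. It is only a stand-in: it is not the Bayesian backfitting algorithm mentioned above, and the function names, the prior variance and the toy data stream are assumptions made for illustration.

```python
import math


def rls_update(w, P, x, y, forgetting=1.0):
    """One recursive least-squares step for the linear model y ~ w . x."""
    n = len(x)
    # P is symmetric, so P x also gives the row vector x^T P.
    Px = [sum(P[i][j] * x[j] for j in range(n)) for i in range(n)]
    denom = forgetting + sum(x[i] * Px[i] for i in range(n))
    gain = [v / denom for v in Px]
    error = y - sum(w[i] * x[i] for i in range(n))
    w_new = [w[i] + gain[i] * error for i in range(n)]
    P_new = [[(P[i][j] - gain[i] * Px[j]) / forgetting for j in range(n)]
             for i in range(n)]
    return w_new, P_new


def fit_stream(samples, n_features, prior_variance=1e6):
    """Process (x, y) pairs sequentially; no sample is stored or revisited."""
    w = [0.0] * n_features
    P = [[prior_variance if i == j else 0.0 for j in range(n_features)]
         for i in range(n_features)]
    for x, y in samples:
        w, P = rls_update(w, P, x, y)
    return w


# Hypothetical noise-free data stream generated from known weights.
true_w = [2.0, -1.0, 0.5]
stream = []
for k in range(200):
    x = [math.sin(0.7 * k), math.cos(1.3 * k), 1.0]
    stream.append((x, sum(a * b for a, b in zip(true_w, x))))

w_hat = fit_stream(stream, n_features=3)
```

Each update costs O(n^2) in the number of features, which is one reason methods of this kind need further structure before they scale to very high dimensions.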

Reinforcement Learning for Robotics and Computational Motor Control

- While supervised statistical learning techniques have significant applications for model and imitation learning, they do not suffice for all motor learning problems, particularly when no expert teacher or idealized desired behavior is available. Thus, both robotics and the understanding of human motor control require reward (or cost) related self-improvement. The development of efficient reinforcement learning methods is therefore essential for the success of learning in motor control.

However, reinforcement learning in high-dimensional spaces such as manipulator and humanoid robotics is extremely difficult, as a complete exploration of the underlying state-action spaces is impossible and few existing techniques scale into this domain.

Nevertheless, humans evidently never need such extensive exploration in order to learn new motor skills; instead, they rely on a combination of watching a teacher and subsequent self-improvement. In more technical terms: first, a control policy is obtained by imitation, and then it is improved using reinforcement learning. It is essential that only local policy search techniques, e.g., policy gradient methods, are applied, as a rapid change to the policy could result in complete unlearning of the policy and might also produce unstable control policies that can damage the robot.

In order to bring reinforcement learning to robotics and computational motor control, we have developed a variety of novel reinforcement learning algorithms, such as the Natural Actor-Critic and the Episodic Natural Actor-Critic. These methods are particularly well-suited for policies based upon motor primitives and are being applied to motor skill learning in humanoid robotics and legged locomotion.
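The following is a minimal sketch of a local policy search step, using a plain likelihood-ratio ("vanilla") policy gradient on a one-dimensional toy problem. It is not the Natural Actor-Critic or its episodic variant. The Gaussian policy, the quadratic reward, the step-size cap and all parameter values are assumptions chosen only to show how small, bounded policy updates can improve an initial policy.

```python
import random


def reward(action, target=1.0):
    """Hypothetical reward: negative squared distance to a target action."""
    return -(action - target) ** 2


def policy_gradient(theta=0.0, sigma=0.5, learning_rate=0.05,
                    iterations=300, batch=20, max_step=0.1, seed=0):
    """Improve the mean `theta` of a Gaussian policy a ~ N(theta, sigma^2)."""
    rng = random.Random(seed)
    for _ in range(iterations):
        actions = [rng.gauss(theta, sigma) for _ in range(batch)]
        rewards = [reward(a) for a in actions]
        baseline = sum(rewards) / batch  # reduces the variance of the estimate
        # Likelihood-ratio gradient: d/dtheta log N(a; theta, sigma^2)
        # equals (a - theta) / sigma^2.
        grad = sum((r - baseline) * (a - theta) / sigma ** 2
                   for a, r in zip(actions, rewards)) / batch
        # Keep every update local: cap how far the policy may move at once.
        step = max(-max_step, min(max_step, learning_rate * grad))
        theta += step
    return theta


learned_mean = policy_gradient()
```

In this sketch, `theta=0.0` plays the role of a policy obtained from imitation, and the capped step is a crude illustration of why local search avoids abrupt policy changes.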

Imitation Learning

- Humans and many animals do not just learn a task by trial and error. Rather, they extract knowledge about how to approach a problem from watching others perform a similar task. From the viewpoint of computational motor control, learning from demonstration is a highly complex problem that requires mapping a perceived action, given in an external (world) coordinate frame, into a totally different internal frame of reference to activate motoneurons and subsequently muscles. Recent work in behavioral neuroscience has shown that there are specialized neurons ("mirror neurons") in the frontal cortex of primates that seem to be the interface between perceived movement and generated movement, i.e., these neurons fire very selectively when a particular movement is shown to the primate, but also when the primate itself executes the movement. Imaging studies with humans confirmed the validity of these results.

Research on learning from demonstration offers tremendous potential for future autonomous robots, but also for medical and clinical research. If we can start teaching machines by showing, our interaction with machines would become much more natural. If a machine can understand human movement, it can also be used in rehabilitation as a personal trainer that watches a patient and provides specific new exercises to improve a diminished motor skill. Finally, the insights into biological motor control developed in learning from demonstration can help to build adaptive prosthetic devices that can be taught to improve the performance of a prosthesis.

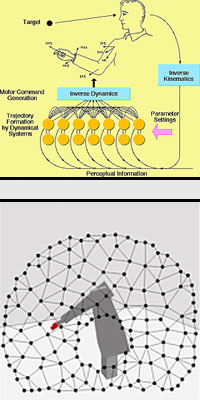

In several projects, we have started to study learning from demonstration from the viewpoint of learning theory. Our working hypothesis is that a perceived movement is mapped onto a finite set of movement primitives that compete for the perceived action. Such a process can be formulated in the framework of competitive learning. Each movement primitive predicts the outcome of a perceived movement and tries to adjust its parameters to achieve an even better prediction, until a winner is determined. In preliminary studies with anthropomorphic robots, we have demonstrated the feasibility of our approaches. Nevertheless, many open problems remain for future research. Collaborators of our laboratory in Japan are also trying to develop theories on how the cerebellum could be involved in learning movement primitives. In our future research, we will employ the humanoid robot above to study learning from demonstration in a human-humanoid environment.
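The competition between primitives described above can be sketched as follows. This is a toy illustration, not the lab's model. The two basis shapes, the single amplitude parameter per primitive, the gradient-descent settings and the names `compete`, `basis_discrete` and `basis_rhythmic` are all assumptions. Each primitive adapts its parameter to predict the observed movement, and the primitive with the lowest remaining prediction error wins.

```python
import math


def basis_discrete(s):
    """Smooth 0 -> 1 ramp over phase s in [0, 1] (a minimum-jerk shape)."""
    return 10 * s ** 3 - 15 * s ** 4 + 6 * s ** 5


def basis_rhythmic(s):
    """One full sine period over phase s in [0, 1]."""
    return math.sin(2 * math.pi * s)


def compete(observed, primitives, steps=200, learning_rate=0.5):
    """Let every primitive fit its amplitude; return the best predictor."""
    n = len(observed)
    phases = [i / (n - 1) for i in range(n)]
    amplitudes, errors = {}, {}
    for name, basis in primitives.items():
        b = [basis(s) for s in phases]
        a = 0.0
        for _ in range(steps):
            # Gradient of the mean squared prediction error w.r.t. amplitude.
            grad = sum((a * bi - yi) * bi for bi, yi in zip(b, observed)) / n
            a -= learning_rate * grad
        amplitudes[name] = a
        errors[name] = sum((a * bi - yi) ** 2
                           for bi, yi in zip(b, observed)) / n
    winner = min(errors, key=errors.get)
    return winner, amplitudes, errors


# Hypothetical perceived movement: a sine wave with amplitude 0.8.
observed = [0.8 * math.sin(2 * math.pi * i / 49) for i in range(50)]
primitives = {"discrete": basis_discrete, "rhythmic": basis_rhythmic}
winner, amplitudes, errors = compete(observed, primitives)
```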

Motor Primitives

- Movement coordination requires some form of planning: every degree-of-freedom (DOF) needs to be supplied with appropriate motor commands at every moment in time. The commands must be chosen such that they accomplish the desired task, but also such that they do not violate the abilities of the movement system. Due to the numerous DOFs in complex movement systems and the almost infinite number of possibilities to use the DOFs over time, there actually exists an infinite number of possible movement plans for any given task. This redundancy is advantageous as it allows a movement system to avoid situations where, for instance, the range of motion of DOFs is saturated, or where obstacles need to be circumvented to reach a goal. But, from a learning point of view, it also makes it quite complicated to find good movement plans, since the state space spanned by all possible plans is extremely large. What is needed to make learning tractable in such high-dimensional systems is some form of additional constraints that reduce the state space in a reasonable way without eliminating good solutions.

The classical way to constrain solution spaces is to impose optimization criteria on the movement planning, for instance, by requiring that the system accomplish the task in minimum time or with minimal energy expenditure. However, it is not trivial to find a cost function that results in adequate behavior. Thus, our research on trajectory planning has focused on an alternative method of constraining movement planning: requiring that movements be built from movement primitives. We conceive of movement primitives as simple dynamical systems that can generate either discrete or rhythmic movements about every DOF. Only speed and amplitude parameters are initially needed to get a movement started. Learning is required to fine-tune certain additional parameters to improve the movement. This approach allows us to learn movements by adjusting just a relatively small set of parameters. We are currently exploring how these dynamical systems can be used to generate full-body movement, how their parameters can be learned with novel reinforcement learning methods, and how such movement primitives can be sequenced and superimposed to accomplish more complex movement tasks. We also consider how our models compare to biological behavior, in order to find out which movement primitives biological systems employ and how such movement primitives are represented in the brain.
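As a rough sketch of what a discrete movement primitive of this kind could look like, the code below integrates a critically damped second-order point attractor for a single DOF. The specific equations, the gain values and the optional `forcing` hook (standing in for the additional learned parameters) are assumptions made for illustration, not the lab's published formulation. Only a speed parameter (`tau`) and an amplitude (the distance from `y0` to `goal`) are needed to produce a movement.

```python
def run_primitive(goal, tau=1.0, y0=0.0, duration=2.0, dt=0.001,
                  alpha=25.0, forcing=None):
    """Integrate tau*dy = z, tau*dz = alpha*(beta*(goal - y) - z) + f."""
    beta = alpha / 4.0  # critical damping: approach the goal without oscillation
    y, z = y0, 0.0
    trajectory = [y]
    for k in range(int(round(duration / dt))):
        # Optional learned shaping term; zero gives the plain attractor.
        f = forcing(k * dt / tau) if forcing else 0.0
        dz = (alpha * (beta * (goal - y) - z) + f) / tau
        dy = z / tau
        z += dz * dt  # explicit Euler step
        y += dy * dt
        trajectory.append(y)
    return trajectory


def time_to_reach(trajectory, level, dt=0.001):
    """First time at which the trajectory reaches `level`, or None."""
    for k, y in enumerate(trajectory):
        if y >= level:
            return k * dt
    return None


fast = run_primitive(goal=0.5, tau=0.5)  # same amplitude, higher speed
slow = run_primitive(goal=0.5, tau=2.0)  # same amplitude, lower speed
```

Changing `tau` rescales the movement in time without changing its end point, which is the sense in which speed and amplitude are separate parameters in this sketch.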

Inspiration from biology also motivates a related trajectory planning project that we conduct. A common feature in the brain is the use of topographic maps as a basic representation of sensory signals. Such maps can be built with various neural network approaches, for instance Kohonen's Self-Organizing Maps or the Topology Representing Network (TRN) by Martinetz. From a statistical point of view, topographic maps can be thought of as neural networks that perform probability density estimation with additional knowledge about neighborhood relations. Density estimators are very powerful tools for performing mappings between different coordinate systems, for performing sensory integration, and for serving as a basic representation for other learning systems. In addition to these properties, topographic maps can also perform spatial computations that generate trajectory plans. For instance, by using diffusion-based path planning algorithms, we demonstrated the feasibility of such an approach by learning obstacle avoidance with a pneumatic robot arm. Learning motor control with topographic maps is also highly interesting from a biological point of view since, in contrast to visual information processing, the usefulness of topographic maps in motor control is still far from understood.
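Diffusion-based path planning can be illustrated on a plain grid, used here as a simplified stand-in for a learned topographic map. In this sketch, which makes no claim to match the robot-arm experiments, an activity value is clamped to 1 at the goal and to 0 at obstacles and outside the map, repeatedly averaged over neighboring cells, and then followed uphill from the start. The grid layout and the function names are assumptions.

```python
NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))


def diffuse(grid, goal, iterations=500):
    """Relax activity over free cells; '#' cells are obstacles."""
    rows, cols = len(grid), len(grid[0])
    u = [[0.0] * cols for _ in range(rows)]
    u[goal[0]][goal[1]] = 1.0
    for _ in range(iterations):
        new = [[0.0] * cols for _ in range(rows)]
        for r in range(rows):
            for c in range(cols):
                if (r, c) == goal:
                    new[r][c] = 1.0  # the goal is a constant source
                elif grid[r][c] != "#":
                    total = 0.0
                    for dr, dc in NEIGHBORS:
                        rr, cc = r + dr, c + dc
                        if 0 <= rr < rows and 0 <= cc < cols:
                            total += u[rr][cc]
                    new[r][c] = total / 4.0  # cells off the map count as 0
        u = new
    return u


def follow_gradient(u, start, goal):
    """Climb the diffused activity from start; None if no uphill step exists."""
    rows, cols = len(u), len(u[0])
    path = [start]
    r, c = start
    while (r, c) != goal:
        options = [(r + dr, c + dc) for dr, dc in NEIGHBORS
                   if 0 <= r + dr < rows and 0 <= c + dc < cols]
        best = max(options, key=lambda p: u[p[0]][p[1]])
        if u[best[0]][best[1]] <= u[r][c]:
            return None
        r, c = best
        path.append(best)
    return path


# Hypothetical map: a wall in column 3 with a single gap in row 3.
grid = ["...#...",
        "...#...",
        "...#...",
        ".......",
        "...#..."]
start, goal = (0, 0), (4, 6)
activity = diffuse(grid, goal)
path = follow_gradient(activity, start, goal)
```

Because obstacle cells hold zero activity and every step must strictly increase the activity, the path cannot enter an obstacle and cannot loop.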

Nonlinear Control

- To date, most approaches to the control of nonlinear systems such as humanoid robots are highly dependent on handcrafted high gains and/or precise rigid body dynamics models. However, in order to ever leave laboratory floors, humanoid robots will require low-gain control so that they cannot damage their environment, and, due to the large extent of unmodeled nonlinearities, learning the dynamics model will become essential. Over the last decades, we have developed several new approaches to nonlinear control, some of which we illustrate in more detail here.

Operational Space Control

- Many difficult robot systems as well as other plants defy any attempt at modeling from a physical understanding. If high-gain control is impossible due to the application, compliance requirements or the usage of light-weight low-torque motors, then learning is often the only choice. In our lab, we have developed a variety of learning and adaptive control methods. Most of these techniques learn extremely fast and outperform human modeling by far in tested robot applications.

Inspired by results from analytical dynamics, we have introduced a novel control architecture together with our collaborator Firdaus Udwadia (Department of Aerospace and Mechanical Engineering). This architecture allows the derivation of both novel and established control laws (e.g., operational space control laws) from a unique immediate cost optimal control perspective. We are currently working on a generalization that will allow the framework to become a learning framework.

We use nonlinear control techniques to address the issue of task achievement in operational space while maintaining coordination among redundant degrees of freedom: a particularly challenging problem for highly redundant robots, like humanoids. In addition to examining both traditional and novel redundancy resolution schemes on our 7-DOF manipulator, we are investigating operational space control techniques as a means of center-of-gravity placement for balancing legged platforms.
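One traditional redundancy resolution scheme can be sketched at the velocity level: the task velocity is mapped through the Jacobian pseudoinverse, and a preferred joint motion is projected into the Jacobian's null space so that it does not disturb the task. The planar three-link arm, the link lengths and the function names below are assumptions for illustration. This is a purely kinematic toy and does not reproduce the force-level operational space controllers discussed above.

```python
import math


def jacobian(q, lengths):
    """2 x n Jacobian of the end-effector position of a planar serial arm."""
    n = len(q)
    cumulative, total = [], 0.0
    for angle in q:
        total += angle
        cumulative.append(total)
    J = [[0.0] * n, [0.0] * n]
    for j in range(n):
        J[0][j] = -sum(lengths[k] * math.sin(cumulative[k]) for k in range(j, n))
        J[1][j] = sum(lengths[k] * math.cos(cumulative[k]) for k in range(j, n))
    return J


def pseudoinverse(J):
    """Right pseudoinverse J^T (J J^T)^-1 for a 2 x n matrix."""
    n = len(J[0])
    a = sum(J[0][k] * J[0][k] for k in range(n))
    b = sum(J[0][k] * J[1][k] for k in range(n))
    d = sum(J[1][k] * J[1][k] for k in range(n))
    det = a * d - b * b
    if abs(det) < 1e-12:
        raise ValueError("arm is at a kinematic singularity")
    inv = [[d / det, -b / det], [-b / det, a / det]]
    return [[J[0][k] * inv[0][c] + J[1][k] * inv[1][c] for c in range(2)]
            for k in range(n)]


def resolve_redundancy(J, task_velocity, preferred_velocity):
    """qdot = J+ xdot + (I - J+ J) qdot0, computed as qdot0 + J+ (xdot - J qdot0)."""
    n = len(J[0])
    J_pinv = pseudoinverse(J)
    residual = [task_velocity[r]
                - sum(J[r][k] * preferred_velocity[k] for k in range(n))
                for r in range(2)]
    return [preferred_velocity[k]
            + sum(J_pinv[k][r] * residual[r] for r in range(2))
            for k in range(n)]


q = [0.3, 0.5, -0.4]      # hypothetical joint angles in radians
J = jacobian(q, [1.0, 1.0, 1.0])
xdot = [0.1, -0.2]         # desired end-effector velocity
qdot0 = [0.5, 0.0, -0.5]   # preferred posture motion
qdot = resolve_redundancy(J, xdot, qdot0)
achieved = [sum(J[r][k] * qdot[k] for k in range(3)) for r in range(2)]
```

The preferred motion `qdot0` changes how the joints move but leaves `achieved` equal to `xdot`, which is the defining property of a null-space term.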

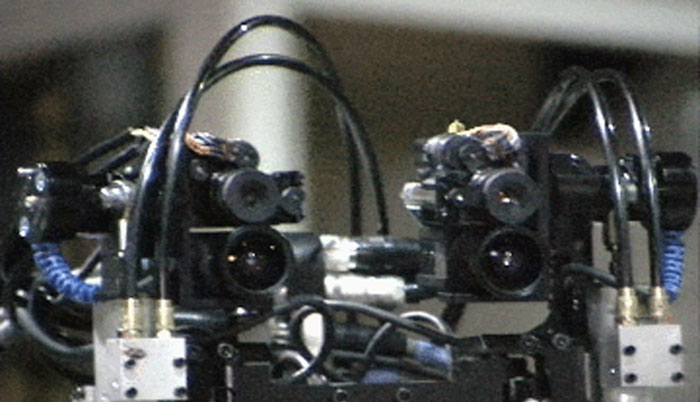

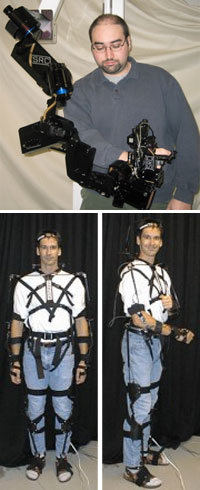

Humanoid Robotics

- We are investigating computational and biological theories of perceptuo-motor control on real humanoid robots. These robots include anthropomorphic arms, oculomotor systems, and even full-body humanoid robots. The following pictures show a selection of views of our full-body humanoid.

Legged Locomotion

- Legged locomotion is one of the most important but also one of the hardest control problems in humanoid robotics, and none of the current approaches completely solves it to date. As is obvious from studies of humans and animals, learning plays a significant role in both the balance stabilization and gait generation of biological legged creatures. It is therefore both an important application and an essential problem for learning control.

Our approaches to learning control for locomotion are closely intertwined with our work on learning and on control described in the other research areas on this website. Previously developed learning and control techniques provide us with a unique framework and allow us to create novel approaches to locomotion. For example, motor primitives for gaits and foot placement can become essential tools. Such motor primitives can be learned using a mixture of imitation learning and reinforcement learning. Their execution as well as the balancing of the robot are hard nonlinear control problems that can be solved by only a few control laws, including learning control laws developed in our lab.

We study locomotion mainly using two systems, i.e., the humanoid robot Computational Brain (CB) and the quadruped robot Little Dog. The humanoid robot CB is one of the most advanced humanoid robots and is driven by hydraulic actuators. Developed by SARCOS Inc., it is located at our collaborator's facility at ATR, Kyoto, Japan, and shown in the picture above. The quadruped robot Little Dog is a special platform for learning locomotion developed by Boston Dynamics Inc. One Little Dog is located at the University of Southern California in Los Angeles, CA, and shown at the right. The Little Dog project started in Fall 2005 and is part of the DARPA Learning Locomotion program.

Computational Neuroscience

Discrete-Rhythmic Movement Interactions

- Force field experiments have been a popular technique for determining the mechanisms underlying motor planning, execution, and learning in the human motor system. In these experiments, robotic manipulanda apply controlled, extraneous forces/torques either at the hand or at individual joints while the subjects carry out movement tasks, such as point-to-point reaching movements or continuous patterns. However, because of the mechanical constraints of the manipulanda used, these experiments have been limited to 2-DOF movements, focusing on shoulder and elbow joints, and thus not allowing for any spatial redundancy in a movement. By utilizing a 7-DOF exoskeleton, our experimental platform allows us to explore a wider variety of movements, including tasks in full 3-D space with the major 7 DOFs of the human arm. Because of the inherent redundancy in these movements, we can specifically examine issues such as inverse kinematics and redundancy resolution in human arm control.

In a double-step target-displacement protocol, we investigate how an unexpected new target modifies ongoing discrete movements. Observations of interest in the literature concern the initial direction of the movement, the spatial path of the movement to the second target, and the amplification of speed in the second movement. Experimental data show that these properties are influenced by the movement reaction time and the interstimulus interval between the onsets of the first and second targets. In the present study, we use dynamic movement primitives (DMPs) to reproduce in simulation a large body of target-switching data from the literature and to show that online correction and the observed target-switching phenomena can be accomplished by changing the goal state of an ongoing DMP, without the need to switch to different movement primitives or to re-plan the movement.
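The core idea, changing only the goal state while the movement is under way, can be sketched with a simple critically damped attractor for a single DOF. This is an assumed, stripped-down stand-in for a DMP (no learned forcing term), and the goals, switch time and gains are hypothetical values, not parameters from the study or from the literature data.

```python
def reach_with_goal_switch(first_goal, second_goal, switch_time,
                           tau=0.5, duration=2.0, dt=0.001, alpha=25.0):
    """Simulate one DOF whose goal jumps at `switch_time` during the movement."""
    beta = alpha / 4.0  # critical damping
    y, z = 0.0, 0.0
    trajectory = []
    for k in range(int(round(duration / dt))):
        t = k * dt
        # The only change at the switch is the goal; the state (y, z) carries on.
        goal = first_goal if t < switch_time else second_goal
        z += alpha * (beta * (goal - y) - z) / tau * dt
        y += z / tau * dt
        trajectory.append((t, y))
    return trajectory


# Hypothetical double-step trial: the target jumps 100 ms after movement onset.
trial = reach_with_goal_switch(first_goal=1.0, second_goal=-0.5,
                               switch_time=0.1)
position_before_switch = trial[99][1]
final_position = trial[-1][1]
```

Since the position and velocity are never reset, the trajectory bends smoothly from the first target toward the second, with no separate re-planning step in the code.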

We investigate the interaction of discrete and rhythmic movements in single- and two-joint experiments. In previous studies of single-joint movement tasks, two measures of interaction were identified: 1) the initiation of a discrete movement superimposed on an ongoing rhythmic movement is constrained to a particular phase window, and 2) the ongoing rhythmic movement is disrupted during the discrete initiation, i.e., phase resetting. The goal of our research is to determine whether the interaction happens at a higher cerebral (i.e., planning) or a lower muscular/spinal (i.e., execution) level. In our psychophysical experiments, we use the Sensuit to record joint angle positions while subjects perform rhythmic and discrete tasks. We are using a simplified spinal cord model to study the effect of the co-occurrence of discrete and rhythmic movements in a single joint.