University of Genoa


LIRA-Lab (Laboratorio Integrato di Robotica Avanzata), the Laboratory for Integrated Advanced Robotics, operates in the Department of Communication, Computer and Systems Science (DIST) of the University of Genova.

The main research theme is artificial vision and sensory-motor coordination from a computational neuroscience perspective.

Product Portfolio

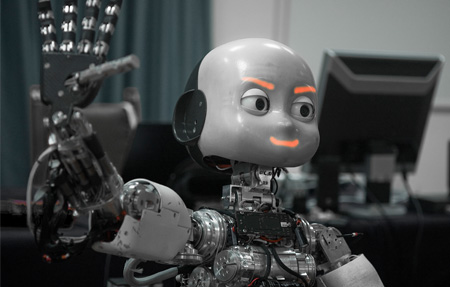

RobotCub

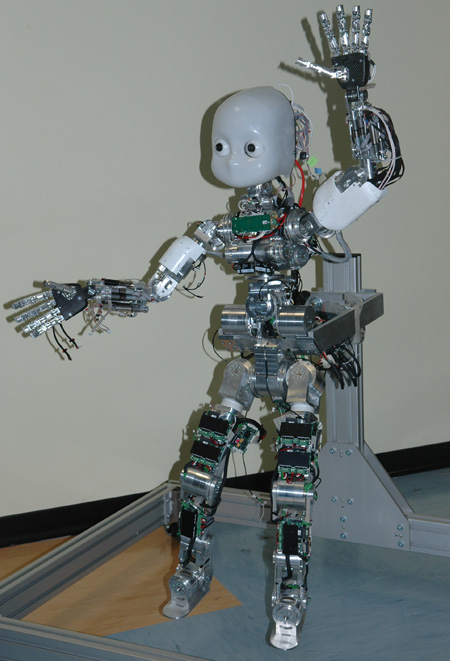

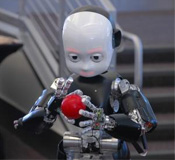

RobotCub is a five-year project funded by the European Commission through Unit E5 "Cognitive Systems, Interaction & Robotics". Our main goal is to study cognition through the implementation of a humanoid robot the size of a 3.5-year-old child: the iCub. This is an open project in many different ways: we distribute the platform openly, we develop open-source software, and we are open to including new partners and forming collaborations worldwide.

RobotCub is a “success story” that led the consortium through 4 years of continuous and intense collaboration among 10 partners, with backgrounds ranging from neurophysiology to engineering, in the development of innovative technology (every component of the iCub has been specifically designed or customised) and cutting-edge science.

Our technology is distributed openly under the GPL license. An important component of the project is devoted to supporting the open nature of the iCub by establishing an international research and training facility in Genoa at the Italian Institute of Technology. In addition to updating the iCub design, the facility will maintain at least three complete iCubs so that scientists from around the world can use them for experimental research before committing to building their own. The research and training facility will also provide a programme of training courses for scientists and students on building and using the iCub and on developing its cognitive capabilities.

To help researchers get their own copy of the iCub, the RobotCub project launched an Open Call. Six successful proposers have been awarded a complete iCub “kit” free of charge. These robots will be available to research centers in Europe. Additional robots will be built as part of other IST FP7 projects, and we are also negotiating several requests from the US and Japan. The iCub middleware and, more generally, some of its technology are now used worldwide, even outside the original domain of humanoid robotics. A lively community of users is actively contributing to the first complete open-source humanoid design.

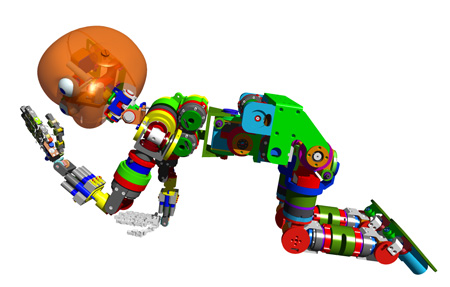

Who am I?

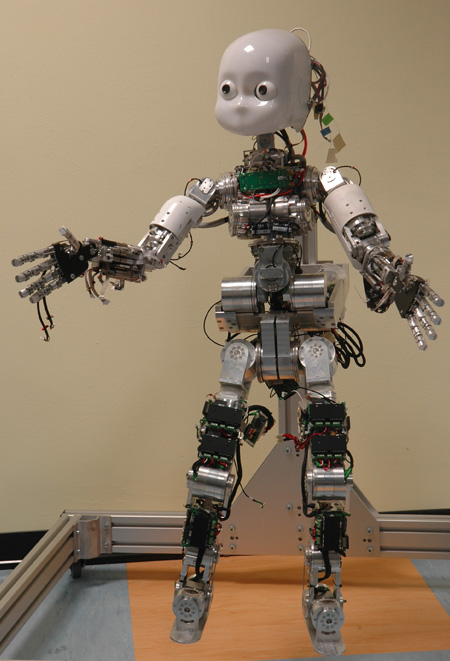

I am a humanoid robot, and my name is iCub. I am able to crawl on all fours and sit up. My hands have many joints, and I am learning to manipulate objects very skillfully (like a man-cub). My head and eyes are fully articulated, and I can direct my attention to things that I like. I can also listen with my ears and feel with my fingertips, and I have a sense of balance. At the moment I can do simple things, but my human friends are teaching me and my brothers something new every day (we are becoming an international family!).

The Babybot project

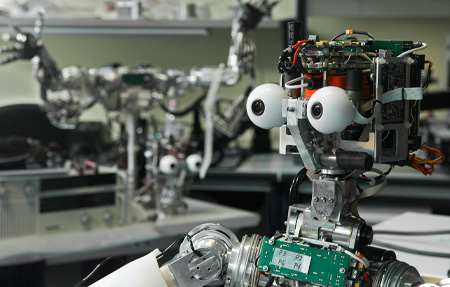

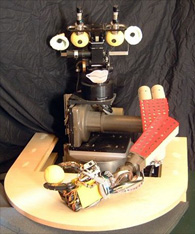

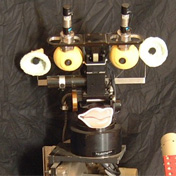

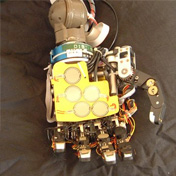

The Babybot is the LIRA-Lab humanoid robot. The latest version has eighteen degrees of freedom distributed along the head, arm, torso, and hand. The head and hand were custom designed at the lab. The arm is an off-the-shelf small PUMA manipulator mounted on a rotating torso. The Babybot's sensory system is composed of a pair of cameras with space-variant resolution, two microphones each mounted inside an external ear, a set of three gyroscopes mimicking the human vestibular system, positional encoders at each joint, a torque/force sensor at the wrist, and tactile sensors on the fingertips and the palm.

The one you see in the picture above is the latest realization of the Babybot, a project started in 1996 at LIRA-Lab. The hardware has gone through many revisions, so little of the mechanics of the first Babybot remains besides the PUMA arm.

Our scientific goal is to uncover the mechanisms underlying the functioning of the brain by building physical models of its neural control and cognitive structures. In our view, physical models are embodied artificial systems that interact freely with a moderately constrained environment. Our approach also draws on studies of human sensorimotor and cognitive development, with the aim of investigating whether a developmental approach to building intelligent systems can offer new insight into aspects of human behavior and new tools for implementing complex artificial systems.

Examples of the behaviors we have implemented include, among others, the control of eye movements such as vergence, saccades, and the vestibulo-ocular reflex. We have been working on integrating different sensory modalities, for example vestibular and visual cues, or acoustic perception and vision. We implemented reaching as a means of physically interacting with the external environment to discover the properties of objects.

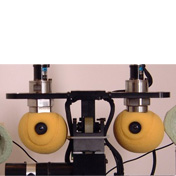

The Head

The Eyes

The Ears

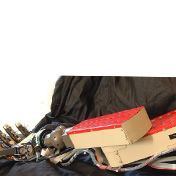

The Arm

The Hand

The Space-variant CMOS Retina

This sensor has been realized within SVAVISCA, a project funded by the European Union under the Long Term Research initiative of ESPRIT.

Image Based Interactive Device for Effective coMmunication (IBIDEM)

Objectives

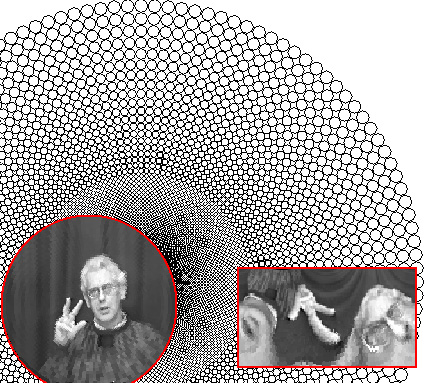

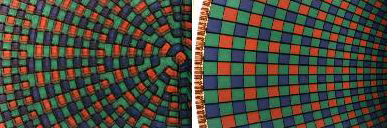

The main objective of IBIDEM is to develop a videophone suitable for lip reading by hearing-impaired people that also provides remote-monitoring capabilities, based on a novel type of space-variant visual sensor and using standard telephone lines. Long-distance communication for both social and practical purposes is becoming an increasingly important factor in everyday life. Hearing impairment, however, prevents many people from using normal voice telephones, for obvious reasons. One solution to this problem for the hearing-impaired is the use of videophones. Currently available videophones working on standard telephone lines (PSTN) do not, however, meet the dynamic requirements necessary for lip reading, and their spatial resolution is also too low.

In order to facilitate lip reading, signing, and finger spelling, IBIDEM will develop a videophone based on a novel type of visual sensor that matches the resolution of the human retina in both the spatial and temporal domains (a retina-like or space-variant sensor). Members of the IBIDEM consortium already hold a patent in Europe and the US for a prototype of such an imaging device. Like the human retina, the visual sensor has a high resolution in the central part and a resolution that degrades toward the periphery of the visual field, as shown in the figure. This geometry reduces the number of pixels in the acquired image (allowing a higher transmission rate on standard telephone lines) without degrading the perceptual appearance of the image, as can be seen from the figure.

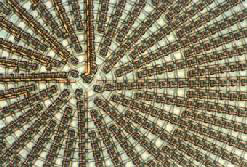

The figure shows in the background the layout of a space-variant sensor with 128 photoreceptors on each of its 64 eccentricities (rings), for a total of 128 × 64 = 8,192 pixels. The circular image is the output of such a sensor during "finger spelling" (this is the image that will be displayed), while the rectangular image on the right shows, enlarged, the log-polar representation of the same image (this is the image that is received/transmitted).
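The log-polar geometry described above can be illustrated with a short Python sketch. It assumes geometrically spaced rings between hypothetical radii `r_min` and `r_max`, uniform angular sectors, and nearest-neighbour sampling of a synthetic 512 x 512 frame; the function names and parameters are illustrative only, and the sketch ignores the central foveal region and the actual photoreceptor sizes of the real chip. It simply shows how 64 rings of 128 samples yield an 8,192-pixel log-polar image, and how much smaller that is than a conventional frame of the assumed size.

```python
import math

# Counts quoted in the text: 64 eccentricities (rings) x 128 photoreceptors each.
N_RINGS = 64
N_SECTORS = 128


def logpolar_grid(r_min, r_max, n_rings=N_RINGS, n_sectors=N_SECTORS):
    """Return sample centres (x, y), relative to the image centre, ring by ring.

    Ring radii grow geometrically from r_min to r_max, so the spacing between
    neighbouring samples increases with eccentricity, as in a retina-like sensor.
    r_min and r_max are illustrative assumptions, not the real chip geometry.
    """
    growth = (r_max / r_min) ** (1.0 / (n_rings - 1))
    grid = []
    for ring in range(n_rings):
        radius = r_min * growth ** ring
        grid.append([
            (radius * math.cos(2.0 * math.pi * s / n_sectors),
             radius * math.sin(2.0 * math.pi * s / n_sectors))
            for s in range(n_sectors)
        ])
    return grid


def sample_logpolar(image, grid):
    """Nearest-neighbour sampling of a 2-D list `image` at the grid points.

    Returns an n_rings x n_sectors array: the rectangular log-polar image
    that would be transmitted instead of the full Cartesian frame.
    """
    height, width = len(image), len(image[0])
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    cortical = []
    for ring in grid:
        row = []
        for x, y in ring:
            # Clamp to the frame in case the outer ring touches the border.
            col = min(max(int(round(cx + x)), 0), width - 1)
            lin = min(max(int(round(cy + y)), 0), height - 1)
            row.append(image[lin][col])
        cortical.append(row)
    return cortical


# Hypothetical demo: a 512 x 512 synthetic frame whose value encodes position.
SIZE = 512
demo_image = [[r * SIZE + c for c in range(SIZE)] for r in range(SIZE)]
cortical = sample_logpolar(demo_image, logpolar_grid(r_min=1.0, r_max=255.0))

n_logpolar = sum(len(row) for row in cortical)
print(n_logpolar)                 # 8192
print(SIZE * SIZE // n_logpolar)  # 32 (262,144 / 8,192)
```

Each row of `cortical` corresponds to one eccentricity and each column to one angular position, which is why the transmitted log-polar image is rectangular even though the sensor itself is circular.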

A second objective of IBIDEM is to use the same equipment for remote monitoring of health status. The system can be used to obtain information about the status of a client in the form of images and could be extended to include various physiological parameters such as heart rate and blood pressure. The IBIDEM project will construct a videophone using a camera with the retinal sensor, a motorized system for moving the camera's point of view, and an LCD to display the transmitted images. This videophone will be a high-quality, low-cost aid for the hearing-impaired as well as being useful for remote monitoring. It will be designed with the active participation of members of the deaf and hard-of-hearing community, and will be demonstrated both in interpersonal communication between two speakers, one or both with hearing disabilities, and in remote monitoring of health conditions using audio and visual information.

The color version of the chip was obtained by microdeposition of filters over the monochromatic layout. Different layouts were tested first by simulation and then through physical implementation. The best-performing microfilter pattern is shown on the right.

Image acquired with the SVAVISCA sensor.

The pixel layout is the same as that described for the IBIDEM retina and is composed of 8,013 pixels in a foveal arrangement.

A picture of the surface of the sensor is shown for the foveal (left) and the peripheral (right) part of the sensor.