- Offer Profile

- The Centre for Robotics and Intelligent Systems is part of the School of Computing, Communications and Electronics of the University of Plymouth. The centre houses a multidisciplinary group with interests in cognitive systems and robotics and their constituent technologies. The group has strong national and international links with both industry and other research institutes.

Fabric Manipulation by Personal Robots

- Aim: The overall aim is the development of robot skills required for the manipulation of fabrics in an unstructured environment, e.g. home or laundry.

This project focuses on sorting tasks, such as those conducted before placing cloth in a washing machine.

The objective is the development of artificial vision and manipulation algorithms enabling a small humanoid robot to conduct fabric sorting tasks.

Method: The project starts with the observation of humans performing a cloth sorting task (Figures 1A and 1B).

The observations were analysed (Figure 2A) and formed the basis for a computational model of human visual search and grasp (Figure 2B).

This model is now being implemented.

Findings: Of special interest are the following facts:

- The eyes saccade from grasping point to grasping point. There is no evidence of overt scanning of the visual scene for the selection of the next grasping point (Figure 2A).

- Saccade targets reflect covert visual search and strategic planning processes, constrained for instance by the availability of the right or left hand for the grasping task and by the motion of the hand after grasping.

Contact: Peter Gibbons, Phil Culverhouse, Guido Bugmann

Link: www.tech.plym.ac.uk/soc/staff/guidbugm/FabricManipulation/fabric.html
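The findings above suggest that the next grasping point is chosen with hand availability in mind. The toy sketch below illustrates that single constraint only. It is not the project's computational model: the `Hand` class, the `next_grasp_target` function and the "nearest candidate to a free hand" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from math import hypot
from typing import List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class Hand:
    """Hypothetical hand state: a name, a 2D position and an availability flag."""
    name: str
    position: Point
    free: bool


def next_grasp_target(candidates: List[Point],
                      hands: List[Hand]) -> Optional[Tuple[str, Point]]:
    """Pick the (hand, point) pair with the shortest reach among free hands.

    Assumed rule for illustration: busy hands are ignored, and the closest
    candidate grasping point to any free hand wins. Returns None when no
    hand is free or there are no candidates.
    """
    best: Optional[Tuple[str, Point]] = None
    best_dist = float("inf")
    for hand in hands:
        if not hand.free:
            continue  # a hand that is still holding cloth cannot grasp
        for point in candidates:
            dist = hypot(point[0] - hand.position[0],
                         point[1] - hand.position[1])
            if dist < best_dist:
                best, best_dist = (hand.name, point), dist
    return best


# Example: the left hand is busy, so the target is chosen for the right hand.
hands = [Hand("left", (-0.2, 0.0), free=False),
         Hand("right", (0.2, 0.0), free=True)]
candidates = [(-0.1, 0.3), (0.25, 0.3)]
choice = next_grasp_target(candidates, hands)
```

In this example the right hand is the only free one, so the candidate closest to it is selected even though another candidate lies nearer the busy left hand.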

Fig 1. Setup for human observation

- A. The subject is placed in front of a pile of cloth to be sorted by colour or size. B. An eye tracker is used to follow the gaze direction of the subject.

Fig 2

- A. Classification of eye and hand movements during a sorting task. B. Computational model of visual search and grasp based on figure 2A.

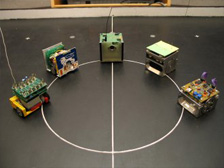

Robot football: multi-robot team competing in the world's most popular sport

- With the ever-increasing number of robots in the real world, in particular in industrial environments, it is increasingly important to build teams of robots capable of high-level co-operation in real-time situations.

The complexity involved in multi-robot autonomous systems requires some form of model situation in which to experiment and develop the inherent technologies. Robot football is clearly an intelligent game that brings together many engineering disciplines (vision, mechanics, electronics, robotics, AI, etc.) into a coherent whole.

The idea of FIRA Robot Soccer originated in 1995, with the first two competitions (Mirosot '96 and Mirosot '97) being held at KAIST, Korea.

At the University of Plymouth we are continually developing a robot football team that currently takes part in competitions governed by FIRA (Federation of International Robot soccer Association).

FIRA Cup competitions bring together skilled researchers and students from different disciplines to play the game of robot soccer. There are many categories, involving robots of different sizes, different pitches, and various levels of robot autonomy, which compete in different soccer tournaments:

- Humanoid Robots (HUROSOT)

- Single Humanoid Robot (S-HUROSOT)

- Micro Robots (MIROSOT 5a side & 11a side)

- Nano Robots (NAROSOT)

- Single Nano Robot (S-NAROSOT)

- Khepera Robots (KHEPERASOT)

- Single Khepera Robot (S-KHEPERASOT)

The University of Plymouth has taken part in the 5-a-side MIROSOT league for many years. It is now focusing on the humanoid robot league, HUROSOT, in which it represents England in international competitions.

Contact: Guido Bugmann

Project page: http://www.tech.plym.ac.uk/robofoot/

IBL: Instruction-based learning. Teaching a robot how to go to the post office

- This project explores a still-untapped method of knowledge acquisition and learning by intelligent systems: the acquisition of knowledge from Natural Language (NL) instruction. This is very effective in human learning and will be essential for adapting future intelligent systems to the needs of naive users. The aim of the project is to investigate real-world Instruction-Based Learning (IBL) in a generic route instruction task. Users will engage in a dialogue with a mobile robot equipped with artificial vision, in order to teach it how to navigate a simplified maze-like environment. This experimental set-up will limit perceptual and control problems and also reduce the complexity of NL processing. The research will focus on the problem of how NL instructions can be used by an intelligent embodied agent to build a hierarchy of complex functions based on a limited set of low-level perceptual, motor and cognitive functions. We will investigate how the internal representations required for robot sensing and navigation can support a usable speech-based interface. Given the use of artificial vision and voice input, such a system can contribute to assisting visually impaired people and wheelchair users.
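The idea of building a hierarchy of complex functions from a limited set of low-level ones can be illustrated with a minimal sketch. It is not the IBL system itself: the `ProcedureLibrary` class, its methods and the primitive names (`go_forward`, `turn_left`, `turn_right`) are hypothetical, and natural language parsing is left out entirely, so each instruction is assumed to have already been reduced to a list of step names.

```python
from typing import Dict, List, Set


class ProcedureLibrary:
    """Hypothetical store of robot procedures: primitives plus taught composites."""

    def __init__(self, primitives: Set[str]) -> None:
        self._primitives: Set[str] = set(primitives)
        self._composites: Dict[str, List[str]] = {}

    def knows(self, name: str) -> bool:
        """True if the name is a primitive or a previously taught procedure."""
        return name in self._primitives or name in self._composites

    def teach(self, name: str, steps: List[str]) -> None:
        """Define a new procedure as a sequence of already-known procedures."""
        if self.knows(name):
            raise ValueError(f"procedure already defined: {name}")
        unknown = [step for step in steps if not self.knows(step)]
        if unknown:
            # In a dialogue system this is where the robot would ask the user
            # to explain the missing steps.
            raise KeyError(f"unknown steps: {unknown}")
        self._composites[name] = list(steps)

    def expand(self, name: str) -> List[str]:
        """Flatten a procedure into the sequence of primitives it stands for."""
        if name in self._primitives:
            return [name]
        flat: List[str] = []
        for step in self._composites[name]:
            flat.extend(self.expand(step))
        return flat


# Example: two taught routes, the second reusing the first.
library = ProcedureLibrary({"go_forward", "turn_left", "turn_right"})
library.teach("go_to_crossroads", ["go_forward", "turn_left"])
library.teach("go_to_post_office",
              ["go_to_crossroads", "go_forward", "turn_right"])
route = library.expand("go_to_post_office")
```

Because a procedure may only be built from names that are already known, and existing names cannot be redefined, the hierarchy in this sketch cannot contain cycles and `expand` always terminates.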

Partners: University of Plymouth, University of Edinburgh.

Contact: Guido Bugmann

Project page: http://www.tech.plym.ac.uk/soc/staff/guidbugm/ibl/index.html

Miniature robot (base 8cm x 8cm)

View from the on-board camera

MIBL: Multimodal IBL. Teaching a personal robot how to play a card game

- Aim: The overall aim is the development of human-robot interfaces allowing the instruction of robots by untrained users, using communication methods natural to humans.

This project focuses on card game instructions, in a scenario where a user of a personal robot wishes to play a new card game with the robot, and needs to first explain the rules of the game. Game instructions are a good example of more general instructions to a personal robot, due to the range of instruction types they contain: sequences of actions to perform and rules to apply.

The objective is to develop a robot student able to understand the instructions from the human teacher and integrate them in a way that supports game-playing behaviour.

Method: The project starts with recordings of a corpus of instructions between a human teacher and a human student.

Starting a robot development project by recording users is an approach termed "corpus-based robotics".

Contact: Guido Bugmann, Joerg Wolf

Link: www.tech.plym.ac.uk/soc/staff/guidbugm/mibl/index.html

- Setup for corpus collection. The teacher communicates with the student (on the left) by using spoken instructions and gestures mediated by the touch screen.

Natural Object Categorization: Recognizing species of plankton

- The aim of our research is to investigate visual object recognition in experts and apply that knowledge to machine recognition. In particular we are interested in expert perception of natural objects and scenes rather than 'novice' or normal perception. Expert perception is characterised by a period of training, which is required to ensure that perceptions meet the criteria for expert behaviour. Projects include expert plankton categorisation and cytological smear slide assessment.

The work also extends our knowledge of visual perception in general.

Since 1989 the Natural Object Categorisation group at Plymouth University have been developing machine vision systems to categorise marine plankton. The group have focussed on the difficult task of discriminating microplankton, as it is a good model for investigating top-down influences of expert judgements on bottom-up processes. It has been particularly revealing to explore the issues of recognition in a target group of objects where natural morphological variation within species causes experts difficulties. An operational machine (known as DiCANN) has been constructed which has been extensively tested in the laboratory with field-collected specimens of a wide range of plankton species, from fish larvae and mesozooplankton to the dinoflagellates of the microplankton. DiCANN employs multi-resolution processing, ‘what and where’ coarse channel analysis with support vector machine categorisation.

The HAB Buoy project concluded with the construction of four Harmful Algal Bloom monitoring systems that have been deployed to partner sites in Italy, Spain and Ireland. The systems possess digital microscopes and DiCANN recognition software. Using a precision pumped water system, they sample 375 ml per hour and image to 1 micron resolution. For monitoring purposes, the DiCANN software is capable of recognising specimens larger than 20 microns, both phytoplankton and zooplankton.

Projects include expert plankton categorisation, motion analysis, texture processing and cytological smear slide assessment.

Research topics:

- Visual Natural Object Categorisation

- Motion segmentation

- Assessing visual performance of experts

- Machine vision (MTutor on-line learning system) (Problem solving and expert-novice differences)

Contact: Phil Culverhouse

Link: newlyn.cis.plym.ac.uk/cis/

Slothbot: The slowest big robot in the world (probably)

- Slothbot is an idea of Mike Phillips realized with the help of the Robot Club of the University of Plymouth.

It is probably the slowest big robot in the world.

Slothbot locates itself by wirelessly querying the data page of the Arch-OS system, which reports on various aspects of the state of the Portland Square Building, including the position of a slow robot in atrium B.

Contact: Mike Phillips

Link: Robot Club

Slothbot