
- Designing the 21st Century

The goal of the Artificial Intelligence Laboratory is to foster intelligence in all its facets by promoting excellence in basic research, education, and society at large. With our activities we hope to contribute – in small ways – to making the world a better place in the 21st century.

To maximize impact, we organize lab tours and seminars for companies, teachers, and their students on a frequent basis, as well as Brown Bag lectures for a wide audience.

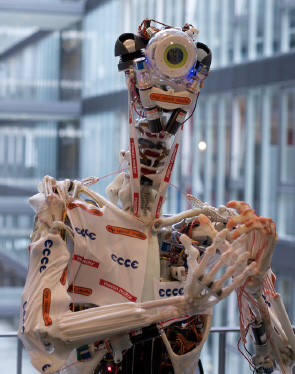

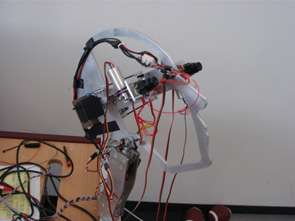

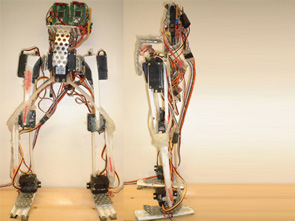

ECCEROBOT-2

ECCEROBOT-2, also referred to as EDS (Embodied Design Study) or simply "Max" because of the numerous maxon motors it employs, was publicly presented at the Hannover Messe 2010. Have a first glance at EDS here (photographer credits: Patrick Knab).

ECCEROBOT, the home of the first anthropomimetic robot!!!

- Behavioral Subsystem

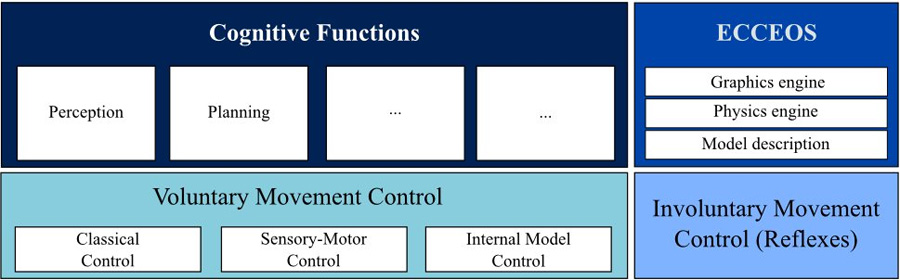

The behavioral subsystem consists of (1) a voluntary movement control unit, (2) an involuntary movement control unit, (3) ECCEOS, the physics-based simulation of the robot, and (4) a cognitive functions unit (see following figure). The individual units are briefly described in the following sections.

Voluntary movement control unit

For voluntary movement control, three different control approaches are developed and integrated into a single hybrid architecture so that the most suitable control strategy can be selected for each task. These three control strategies are: (1) classical control, (2) sensory-motor control, and (3) internal model control.

Involuntary movement control unit (reflexes)

Even though reflexes are not voluntarily controlled, they are part of the global behavior. In general, a reflex requires the actuation of more than one muscle (i.e. agonist and antagonist), and hence the reflex control system cannot be implemented at the level of a single actuator. One reflex that is planned to be implemented is the vestibulo-ocular reflex. Another group of reflexes that might become important in the course of the project are withdrawal reflexes, which could be triggered, for instance, by accidental touch.

ECCEOS

ECCEOS, the physics-based computer model of the robot, serves multiple purposes. It is used as a demonstration and development platform for all controllers as well as an internal model for motion planning and for error monitoring during execution. The three main components of the simulator are: (1) a physical and graphical model description, (2) a physics engine (required for collision detection, simulation of the actuator dynamics, etc.) and (3) a graphics engine (for visual feedback and interaction with the simulated scene).

Cognitive functions unit

The cognitive functions unit is what shapes the behavior of the robot. It consists of a perception unit, planning unit, decision making unit, etc.

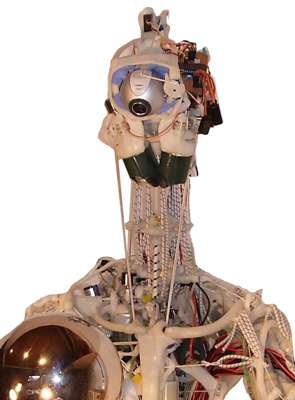

ECCEROBOT


Skeleton

ECCEROBOT's skeleton is a very detailed replica of the human model, consisting of bones and joints formed out of polymorph.

Polymorph

The thermoplastic polymorph (a caprolactone polymer) is polythene-like in many ways, but when heated to only 60°C it fuses (or softens, when already fused) and can be freely hand moulded for quite some time. It has a distinctly bone-like appearance when cold and can be reheated and remoulded as many times as necessary.

In practical engineering terms, Polymorph is tough and springy. Its tensile strength is good: Polymorph has the highest tensile strength of all the caprolactones, at 580 kg/cm². It can be further strengthened (and stiffened, if necessary) by adding other materials, such as wire or metal rods and bars.
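To compare the quoted tensile strength with values given in SI units, the figure can be converted to megapascals. The sketch below assumes that "kg/cm2" means kilogram-force per square centimetre, which the text does not state explicitly; the function name is only illustrative.

```python
# Assumption: "kg/cm2" in the text means kilogram-force per square centimetre.
STANDARD_GRAVITY = 9.80665  # m/s^2, the value that defines the kilogram-force


def kgf_per_cm2_to_mpa(value: float) -> float:
    """Convert a stress from kgf/cm^2 to megapascals."""
    pascals = value * STANDARD_GRAVITY / 1e-4  # 1 cm^2 = 1e-4 m^2
    return pascals / 1e6


print(round(kgf_per_cm2_to_mpa(580), 1))  # prints 56.9
```

Under that assumption, 580 kg/cm² corresponds to roughly 57 MPa.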

Actuator subsystem

The actuator subsystem consists of approx. 80 individual actuators (one for each muscle). Each actuator, in turn, consists of a screwdriver motor, a gearbox, a spindle, a piece of kite line as tendon, and an elastic component represented by shock cord.

Actuator working principle

The actuator working principle is very simple: by winding the kite line around the spindle, the “muscle” can be either “innervated” or “relaxed”, depending on the direction of rotation of the motor (see following figure).
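The winding principle can be illustrated with a little geometry. The following is a minimal sketch, not the project's controller: it assumes an ideal spindle of constant radius (no layering of the line), ignores the gearbox ratio and the stretch of the shock cord, and uses a hypothetical spindle radius of 5 mm. All function names are illustrative.

```python
import math


def tendon_length_change(spindle_radius_m: float, spindle_turns: float) -> float:
    """Length of kite line wound onto (positive) or off (negative) the spindle."""
    return 2.0 * math.pi * spindle_radius_m * spindle_turns


def muscle_state(spindle_turns: float) -> str:
    """Map the direction of rotation to the terms used in the text."""
    if spindle_turns > 0:
        return "innervated"  # line is wound up, the tendon shortens
    if spindle_turns < 0:
        return "relaxed"  # line is released, the tendon lengthens
    return "unchanged"


# Hypothetical example: a 5 mm spindle turned twice in the winding direction.
print(round(tendon_length_change(0.005, 2.0), 4))  # prints 0.0628
print(muscle_state(2.0))  # prints innervated
```

In the real actuator the shock cord sits in series with the tendon, so part of the wound-up length would go into stretching the elastic element rather than moving the joint.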

Sensor subsystem

The sensor subsystem consists of proprioceptive, visual, audio & vibration, inertial, and tactile units, each of which is briefly explained in the following sections.

Proprioception unit

Proprioception, the sense of the relative position of neighbouring parts of the body, is fundamental for well-controlled movements and interactions with the environment.

Visual unit

Similar to the human model, the robot will be equipped with two “eyes” represented by two high-speed, high-definition cameras.

Audio & Vibration unit

To make voice commands possible, for instance, the robot will be equipped with an audio system consisting of two microphones that mimic the acoustical and directional characteristics of the human ears. In practice, however, vibration and impact sensing may be more important, which is why MEMS accelerometers will be placed at strategic locations around the body.

Inertial unit

The efficiency of the visual unit's image processing depends strongly on the stability of the perceived images. Imagine a person shaking their head while reading a book. Thanks to the vestibulo-ocular reflex, they would still be able to read the text. If, however, they moved the book at the same speed, they would no longer be able to read the text, because visual processing (which is much slower than vestibular processing) would be the only way to compensate for the movement. Hence, an inertial measurement unit will be included in the head of the robot to simplify image processing by enabling an equivalent of the vestibulo-ocular reflex.
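The compensation idea can be sketched in a few lines. This is only an illustration of the principle, assuming a single rotation axis, an ideal gyroscope, and a unit reflex gain; the function names and the example reading are hypothetical and not taken from the project.

```python
def vor_eye_velocity(head_velocity: float, gain: float = 1.0) -> float:
    """Eye velocity command (rad/s) that counter-rotates against the head."""
    return -gain * head_velocity


def gaze_velocity(head_velocity: float, eye_velocity: float) -> float:
    """Velocity of the line of sight in the world frame (rad/s)."""
    return head_velocity + eye_velocity


gyro_reading = 0.5  # rad/s, hypothetical yaw rate reported by the inertial unit
eye_command = vor_eye_velocity(gyro_reading)
print(gaze_velocity(gyro_reading, eye_command))  # prints 0.0
```

With a gain of 1 the line of sight stays fixed in the world, so the image on the camera does not move and no visual processing is needed to stabilise it.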

Tactile unit

For manipulation and grasping tasks, sensory feedback from tactile sensors is indispensable. Hence, force-sensitive resistors and/or sensor matrices will be placed in the fingertips and in the palms of the robot’s hands.

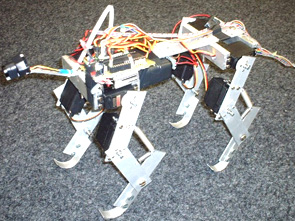

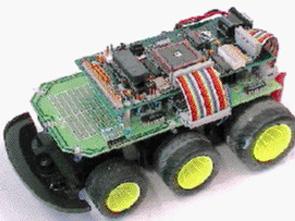

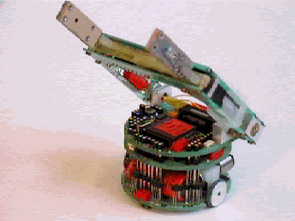

mini-rHex

We have built a miniature and cheap version of the rHex robot, originally created by a group of universities under a large DARPA program in the US.

Our mini-rHex is 26 x 14 cm in size and weighs 530 grams. The whole robot costs USD 190 to produce. In order to increase speed, each leg has 3 evenly spaced pedals. We have also added a passive spine joint to the body of the robot, between the middle and hind legs. We will shortly conduct an experiment to evaluate the advantages and disadvantages of this spine joint for handling rough terrain.

Preliminary runs demonstrate that the robot is capable of handling various terrain materials and going over obstacles as high as 110% of its own height (see video). On flat surfaces, the robot can go at about two body lengths per second with the current configuration; in theory, higher speeds should be possible.
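For a sense of scale, the relative figures can be turned into absolute ones. The sketch below assumes that the 26 cm dimension is the body length; the robot's height is not given in the text, so it is left as a parameter and the 10 cm used in the comment is purely hypothetical.

```python
BODY_LENGTH_M = 0.26  # assumption: the longer of the two quoted dimensions
BODY_LENGTHS_PER_SECOND = 2.0  # "about two body lengths per second"
OBSTACLE_RATIO = 1.10  # obstacles up to 110% of the robot's own height


def forward_speed_m_per_s(body_length_m: float = BODY_LENGTH_M,
                          lengths_per_s: float = BODY_LENGTHS_PER_SECOND) -> float:
    """Absolute forward speed on flat ground."""
    return body_length_m * lengths_per_s


def max_obstacle_height_m(robot_height_m: float) -> float:
    """Tallest obstacle the robot climbed, for a given (unstated) robot height."""
    return OBSTACLE_RATIO * robot_height_m


print(forward_speed_m_per_s())  # prints 0.52
# max_obstacle_height_m(0.10) would give about 0.11 m for a hypothetical 10 cm tall robot.
```

Under that assumption the current configuration moves at roughly half a metre per second.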

This project is funded by the Locomorph project and supervised by Lijin Aryananda.


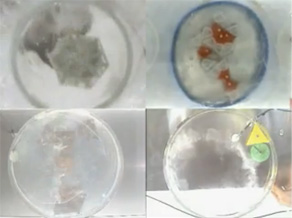

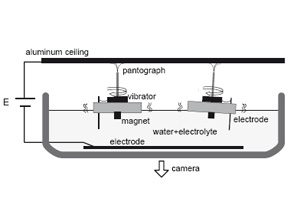

Tribolon(s)

When we take a look at the micro-world of a cell, we see a vast number of molecules interacting with each other and somehow managing highly autonomous life activity.

There is no central "control", yet they self-assemble, and that is how (and why) you are reading this sentence.

In order to solve the mystery of life (and create a "living" robot), we developed a research platform, Tribolon (derived from Tribology), which is the only self-propelled assembly robot in the world.


Robots

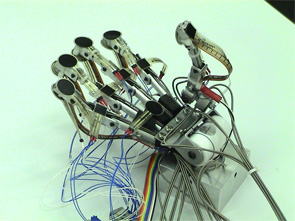

Yokoi Robot Hand I, II, III

- by Gabriel Gomez, Alejandro Hernandez Arieta, Hiroshi Yokoi, and Peter Eggenberger Hotz

Produced by Tsukasa Kiko Engineering

The tendon-driven robot hand is partly built from elastic, flexible, and deformable materials. For example, the tendons are elastic, the fingertips are deformable, and there is also deformable material between the fingers. It has 15 degrees of freedom that are driven by 13 servomotors. A bending sensor is placed on each finger to measure its position, and a set of standard FSR pressure sensors covers the hand (e.g., on the fingertips, on the back, and on the palm).

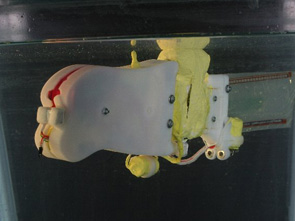

WANDA

- by Marc Ziegler

The diversity of animal morphology is particularly impressive in the underwater world. It has been found that various morphological properties have been optimized for efficient locomotion in the course of evolution. In this project we explore such morphological properties for the purpose of underwater robot locomotion. Toward adaptive underwater locomotion, this project investigates a fish-like swimming robot. Using motor control with only one degree of freedom, this robot exhibits surprisingly rich behavioral diversity in a three-dimensional underwater environment.

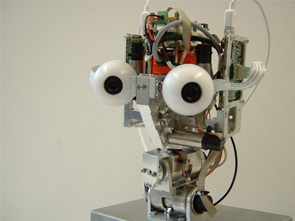

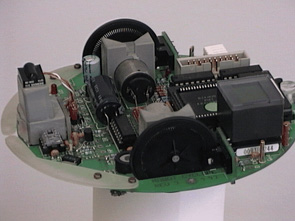

iCub (head)

- Developed within the European research project IST-004370 RobotCub

Maintained and run at the AILab by Jonas Ruesch.

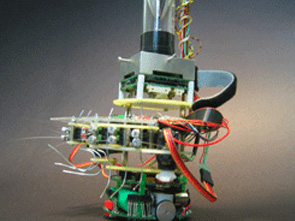

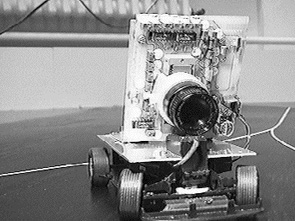

Robot Vision

- by Raja Dravid, Martin F. Krafft, Gabriel Gomez, and Jonas Ruesch

The main objective in building this robot is to study how a coherent representation of visual, auditory, and haptic sensations is built and how this representation can be used to describe or elicit the sense of presence. The goal is to understand representation in humans and machines. We intend to pursue this in the framework of development, e.g. by studying the problem from the point of view of a developing system. Within this framework we will use two methodologies: on the one hand, we will investigate the mechanisms used by the brain to learn and build this unified representation by studying and performing experiments with human infants; on the other hand, we intend to use artificial systems (e.g. robots) as models and demonstrators of perception-action representation theories.

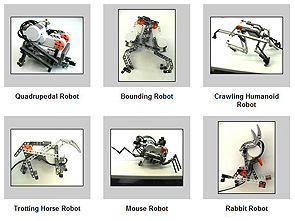

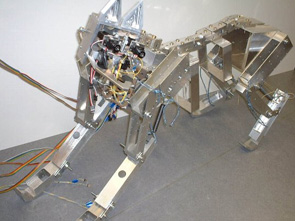

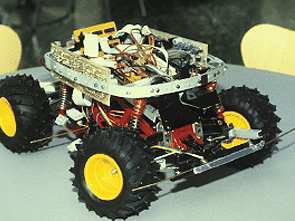

MiniDog I, II, III, IV

- by Fumiya Iida

To achieve rapid locomotion, exploiting morphological properties is essential. The running quadruped robot "MiniDog" is capable of relatively robust rapid legged locomotion by using the intrinsic body dynamics induced by its spring-like properties, weight distribution, and body dimensions. Owing to the use of body dynamics, the control of the robot is extremely simple and, moreover, the robot shows rich behavioral diversity.

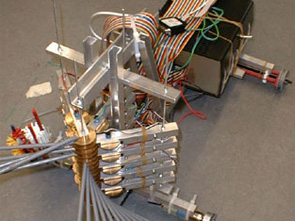

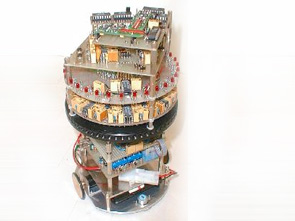

Tribolon

- by Shuhei Miyashita

When we take a look at the micro-world of a cell, we see a vast number of molecules interacting with each other and somehow managing highly autonomous life activity.

There is no central "control", yet they self-assemble, and that is how (and why) you are reading this sentence.

In order to solve the mystery of life (and create a "living" robot), we developed a research platform, Tribolon (derived from Tribology), which is the only self-propelled assembly robot in the world.

Check out the details at www.tribolon.com. You can also download some publications.

Pneumatic robot

- by Raja Dravid

This robot arm is actuated by highly non-linear pneumatic artificial muscles. For more details, please ask the developer, Raja Dravid.

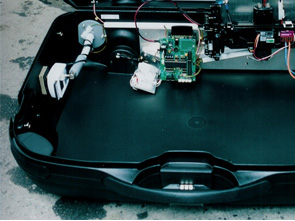

RoboSuitcase

- by Andreas Fischer

The robot started as a remote controlled car (RC-car) with a somewhat special body. The body is a hard-shell suitcase with an only slightly modified RC-car base built into it. The RC-car is mounted in such a way that the motor can power the rear wheels, while the steering servo is connected to one of the front wheels. The suitcase can be switched from microcontroller control to ordinary remote control. For this purpose, a receiver that can be activated through a switch is built into the suitcase. This has been built in for demonstration purposes and is of no further use in this assignment.

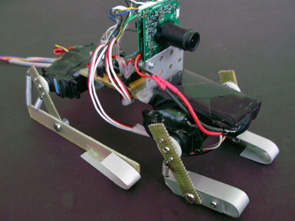

Artificial Mouse

- by Simon Bovet and Miriam Fend

We have developed an artificial whisker sensor based on microphones. Natural rat whiskers are glued onto capacitor microphones such that deformations of the whisker move the membrane of the microphone. This signal can be amplified and digitized. AMOUSE aimed at the construction of a mobile robot equipped with an artificial whisker system that serves as a means of validating models based on the results of neurophysiological experiments and neural modelling. The AMouse is a standard Khepera II robot equipped with two artificial whisker arrays. The whiskers consist of natural rat whiskers glued onto capacitor microphones, so each whisker is a single sensor. The whiskers can be moved actively. Data acquisition is done on a laptop with a PCMCIA data acquisition card. Furthermore, the robot has an omnidirectional camera, allowing experiments on tactile perception, multimodal issues, and visual navigation.

Swimming Humanoid

- by Marc Ziegler and Lijin Aryananda

Through many experiments with this swimming humanoid robot, we have noticed that humans are restricted in many ways when swimming. For example, we have to take breaths while swimming, which is not the case for the robot. Many other aspects of the human system have also been revealed. A more detailed description will follow soon.

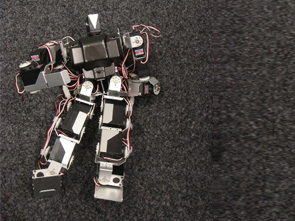

Kondo Humanoid

- Used by Pabro Ventura

Produced by Kondo Kagaku Co., Ltd.

Currently we have 3 Kondo humanoids purchased from the Japanese company. The choreography artist Pabro Ventura has been using this robot for the next surprise at an exhibition!

Crazy Bird

- by Mike Rinderknecht and Maik Hadorn

"Cheap" Quadrupedal Locomotion (AI Lab, University of Zurich, Switzerland)

Body dynamics can significantly reduce both the computational effort and the complexity of an agent's controller. In this work, we show that the phase delay between the legs of a quadrupedal agent, used as the only control parameter, is sufficient to navigate on a 2D surface.

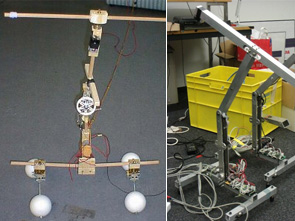
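The idea of steering a quadruped with a single phase delay, as described for Crazy Bird above, can be sketched with sinusoidal leg oscillators. This is a hypothetical illustration, not the controller used in the project: the assignment of the delay to the hind legs, the frequency, and the amplitude are all assumptions.

```python
import math


def leg_targets(t: float, phase_delay: float,
                frequency_hz: float = 2.0, amplitude_rad: float = 0.5) -> dict:
    """Target angles (rad) for four legs driven by one shared oscillation.

    Assumption: the fore legs follow the base oscillation and the hind legs
    follow the same oscillation shifted by `phase_delay`, which is the only
    parameter a higher-level controller would change.
    """
    omega = 2.0 * math.pi * frequency_hz
    fore = amplitude_rad * math.sin(omega * t)
    hind = amplitude_rad * math.sin(omega * t - phase_delay)
    return {"fore_left": fore, "fore_right": fore,
            "hind_left": hind, "hind_right": hind}


# With zero delay all legs move in phase; with a delay of pi the hind legs
# move in antiphase to the fore legs.
in_phase = leg_targets(0.1, 0.0)
anti_phase = leg_targets(0.1, math.pi)
```

Everything else about the resulting motion would be left to the body dynamics, which is the point the project description makes.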

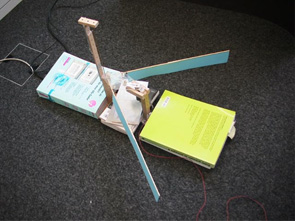

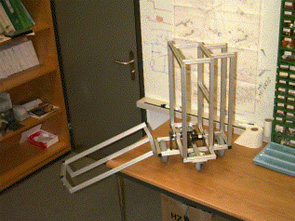

Whirling Arm

- by Lukas Lichtensteiger

The "Whirling Arm" will be used at the Artificial Intelligence Lab as an experimental tool for research on insect vision. It can be seen as a kind of "flight simulator for insect eyes": an artificial insect eye (a camera or a specially constructed compound eye) is mounted on the Whirling Arm and is then subjected to fast and complex movements through space that can (to some degree) mimic the actual situation encountered by the head of a flying insect. One goal of these studies is to better understand how the specific features of insect eyes (e.g., their sensor morphology) relate to the visual input the animal encounters during flight and how this can facilitate flight control. Since insects like the housefly can navigate very fast, the Whirling Arm has to be able to produce very fast reactions. Consequently, it was designed for a minimum of inertia in each of its three rotational degrees of freedom while at the same time providing enough motor power for fast accelerations.

Stumpy I, II, III, IV

- by Raja Dravid

The Stumpy Project explores the fundamental design principles of locomotion on the basis of our biological knowledge. However, we do not simply copy the design of biological systems; we try to extract the underlying principles. One of the most fundamental challenges in this project is how to enhance the behavioral diversity of a robot while conserving the simplicity of its morphological and physiological design. Given this perspective, we are investigating the interplay between oscillation-based actuation, material properties, and interaction with the environment. Stumpy uses inverted pendulum dynamics to induce bipedal hopping gaits. Its mechanical structure consists of a rigid inverted T-shape mounted on four compliant feet. An upright "T" structure is connected to this by a rotary joint. The horizontal beam of the upright "T" is connected to the vertical beam by a second rotary joint. Using this mechanical structure with two degrees of freedom and simple oscillatory control, the robot is able to perform many different locomotion behaviors, including hopping, walking, and running gaits.

mini stumpy

- by Fumiya Iida

Many small versions of Stumpy were built by Fumiya Iida. Although the size of the robot significantly affects its overall dynamics, we have been able to demonstrate the stability that arises from the morphology and the mechanisms related to the dynamics.

Rabbit I,II

- by Arthur Korn and Fumiya Iida

The robot rabbit was built on the same concept as Stumpy. It can move "forward" by jumping with two rotating masses. Robustness on different types of ground with different friction was also observed.

Dumbo

- by Fumiya Iida and Hiroshi Yokoi

Dumbo is one of the outstanding robots that defy common wisdom. For more details, please visit our lab!

Geoff

- by Fumiya Iida

The main objective of this project is to explore the design principles of biologically inspired legged running robots. In particular, this project focuses on a minimalistic model of rapid locomotion of quadruped robots inspired by biomechanics studies. The goal of this project is, therefore, to develop technology for a form of rapid legged locomotion as well as to further our understanding of locomotion mechanisms in biological systems.

Schmaroo

- by Alex Schmitz

Schmaroo is a kangaroo robot which can jump a few cm. The robot has a camera and a long leg that generates the vertical force needed to jump. The name is derived from the developer's name: Schmitz + Kangaroo.

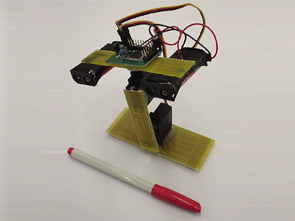

Coffee

- by Daisuke Katagami

Coffee was developed to investigate human-robot interaction. This robot has two actuators which enable the head of the robot to move in several ways. Through experiments with this robot, we learned that even a simple nodding movement of the head can be classified into many types.

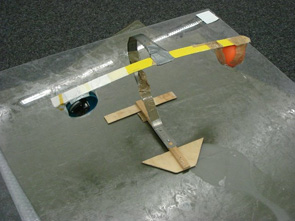

Patterfly

- by Koji Shibuya

In this project, we are trying to develop a robot capable of hovering by beating its wings. In building the robot, we focused on the concepts of "cheap design" and "morphological computation" and took advantage of "material properties", ideas that have recently been proposed in the field of artificial intelligence. Based on these concepts, we designed a robot with one DC motor and a crank mechanism for beating the wings. The robot's wings beat in the horizontal plane and were made of soft materials, such as polyurethane, cardboard, and plastic, to increase the downward air flow. We observed videos of the flapping wings and measured the lift for every wing material and size. From the results, we concluded that wing materials and sizes should be chosen carefully according to the flapping frequency, the weight of the robot, and so on.

Puppy I,II,III

- by Fumiya Iida

Most of the projects related to locomotion were launched by Fumiya Iida. This project shows that having an adequate morphology enables the dynamic system to achieve stable locomotion with a simple controller (brain).

Bendy Robo

- by Kojiro Matsushita and Hiroshi Yokoi

In this project, aiming to acquire a design scheme for a pseudo-passive dynamic walker, we have been developing the robot to model the lower limb from both a systematic and a control perspective.

Fork Leg Robot

- by Kojiro Matsushita and Hiroshi Yokoi

The relation between the morphology and the material properties of a biped robot is worth investigating, given the current state of the art in the field. Considering the affinity of these two aspects, we designed the Fork Leg Robot.

Monkey Robot

- by Dominic Frutiger, Fumiya Iida, and Josh Bongard

How does a monkey manage to jump and climb trees with such a heavy body? In this project, we developed a monkey robot to reveal the monkey's secret mechanism, investigating in particular the intrinsic oscillation of the body.

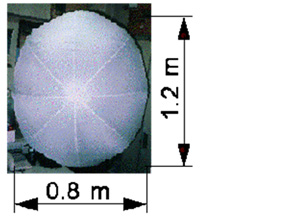

Melissa

- by Fumiya Iida

Melissa was developed as a robotic platform for the Flying Robot Project, which is part of the Biorobotics research at the AILab, Dept. of Information Technology, Univ. of Zurich. Melissa is a blimp-like flying robot consisting of a helium balloon, a gondola hosting the onboard electronics, and an offboard host computer. The balloon is 2.3 m long and has a lift capacity of approximately 400 g. Inside the gondola, there are 3 motors for rotation, elevation, and thrust control, a four-channel radio transmitter, a miniature panoramic vision system, and the batteries.

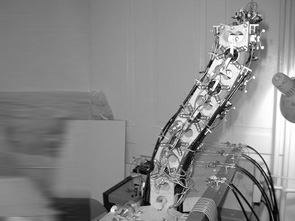

The Dextrolator I

- by Raja Dravid

In its most complex configuration the Dextrolator is composed of seven segments, actuated by seven motors. It receives feedback from 126 sensors. The primary tasks the manipulator must perform are to move through a tube without touching the walls, to find its way to a specific point in space, and finally to navigate through an environment to a certain point while avoiding obstacles.

EyeBot

- by Lukas Lichtensteiger

EyeBot is a robot that is able to position its sensors autonomously using electric motors. The task of the robot is to employ motion parallax to estimate a critical distance to obstacles. This task is achieved by adapting the morphology of the compound eye with an evolutionary algorithm while using a fixed neural network to control the robot. Each of the 16 long tubes contains a light sensor which can detect light within an angle of about 2 degrees. The tubes can be rotated about a common vertical axis.
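The geometric relation behind motion parallax can be written down directly. The sketch below is a textbook relation rather than EyeBot's actual method (which relies on an evolved eye morphology and a fixed neural network): for a sensor translating in a straight line at speed v, a stationary object at bearing θ from the direction of travel and at distance d sweeps across the view with angular velocity ω = v·sin(θ)/d. The function name and example values are hypothetical.

```python
import math


def distance_from_parallax(speed_m_per_s: float, bearing_rad: float,
                           angular_velocity_rad_per_s: float) -> float:
    """Distance to a stationary object from its apparent angular velocity.

    Assumes pure translation of the sensor and a non-zero angular velocity.
    """
    return speed_m_per_s * math.sin(bearing_rad) / angular_velocity_rad_per_s


# Hypothetical example: moving at 0.1 m/s, an object seen at 90 degrees to the
# side drifts across the view at 0.5 rad/s.
print(round(distance_from_parallax(0.1, math.pi / 2, 0.5), 3))  # prints 0.2
```

Because the apparent angular velocity depends on the bearing, tubes pointing sideways see nearby obstacles move faster than tubes pointing forward, which is one reason the angular arrangement of the sensors matters for this task.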

T-Bot

- by Lukas Lichtensteiger

This robot is one example of a series of robots rapidly built from a children's construction kit using our Flexible Robot Building Kit. We used an artificial evolutionary system to evolve simulated agents that can complete a specific task. Particular attention was devoted to the role of the morphology of these robots with regard to their fitness in a specific environment. These simulated agents (left) were then used as blueprints to build real-world robots (right). Finally, the robots were tested in a real-world environment to evaluate their fitness.

ROBOT BABE

- by Max Lungarella

Our experimental setup consists of: (a) an industrial robot manipulator with six degrees of freedom (DOF), (b) a color stereo active vision system, and (c) a set of tactile sensors placed on the robot's gripper. This robot has been used for experiments related to the field of developmental robotics.

samurai I,II

- by Hiroshi Kobayashi

Produced by Neuronics, Inc.

The Samurai robot was designed by Hiroshi Kobayashi and is being built by Neuronics, Inc., a spin-off company of the AILab. It will be used by undergraduate students in classes and tutorials in New Artificial Intelligence, but also for research purposes. The Samurai is equipped with:

- An array of 12 infrared proximity sensors
- 8 bumper sensors
- An omnidirectional color camera
- Differential steering with two 15 Watt DC motors
- Motorola 68336 main processor

Sahabot 2

- by Dimitrios Lambrinos and Ralf Moeller, in cooperation with Rosys AG

Sahabot 2 was built by Dimitrios Lambrinos and Ralf Moeller, in cooperation with Rosys AG, Hiroshi Kobayashi, and Marinus Maris. Like its predecessor, Sahabot, it was built for a specific experiment involving the navigation behavior of the desert ant Cataglyphis, and it was run in the Tunisian part of the Sahara desert in August 1997, in the same area where ethologists collected data on the real Cataglyphis.

Sahabot 1

- by Dimitrios Lambrinos, Hiroshi Kobayashi, and Marinus Maris

Sahabot was built by Dimitrios Lambrinos, Hiroshi Kobayashi, and Marinus Maris. It was built for a specific experiment involving the navigation behavior of the desert ant Cataglyphis. It was run in the Tunisian part of the Sahara desert in July 1996, in the same area where ethologists collected data on the real Cataglyphis.

Honey

- by Hiroshi Kobayashi with some assistance from Rene Schaad

Honey is a flying autonomous robot. It is an indoor blimp controlled by an off-board PC. It sports various sensors, including a camera, and four propellers for motion control. It was mainly developed by Hiroshi Kobayashi with some assistance from Rene Schaad. Honey was mainly built for use in navigation experiments and for experiments involving human-robot interaction.

Yoko

- by Hiroshi Yokoi?

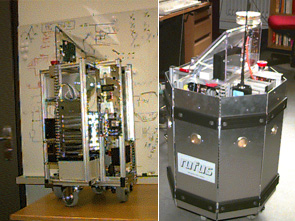

Mrs.Gloria Teasdale I,II

- by Rene Schaad

Gloria is a modified Didabot. It improves on a Didabot by providing longer battery life (for now up to 1.5 hours), a protective cover, bump sensors, and a real-time clock. The modifications were necessary because Gloria serves as a buddy to Rufus, which operates in an unmodified office environment for extended periods of time.

Analog robot

- by Ralf Moeller

The Analog robot performs visual homing in purely analog hardware. The hardware is based on the "Average Landmark Vector" model. For a description, see our paper "Landmark Navigation without Snapshots: the Average Landmark Vector Model" which is available on Ralf Moeller's home page.
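The Average Landmark Vector idea can be illustrated in software, although the robot itself implements it in analog hardware. The sketch below follows the usual statement of the model: the average landmark vector (ALV) is the mean of the unit vectors pointing from the agent to each visible landmark, expressed in a compass-aligned frame, and the home direction is the difference between the current ALV and the ALV stored at the home position. For simulation purposes the code computes the bearings from known landmark coordinates, which a real robot would not have; all names and coordinates are hypothetical.

```python
import math


def average_landmark_vector(position, landmarks):
    """Mean of the unit vectors from `position` to each landmark (world frame)."""
    sum_x = sum_y = 0.0
    for lx, ly in landmarks:
        dx, dy = lx - position[0], ly - position[1]
        norm = math.hypot(dx, dy)
        sum_x += dx / norm
        sum_y += dy / norm
    count = len(landmarks)
    return (sum_x / count, sum_y / count)


def home_vector(current_alv, home_alv):
    """Direction of travel toward home: current ALV minus the stored home ALV."""
    return (current_alv[0] - home_alv[0], current_alv[1] - home_alv[1])


landmarks = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
home_alv = average_landmark_vector((0.0, 0.0), landmarks)
current_alv = average_landmark_vector((0.5, 0.0), landmarks)
direction = home_vector(current_alv, home_alv)
# `direction` points along the negative x axis, i.e. from (0.5, 0) back toward home.
```

Only a single two-component vector has to be stored for the home position, which is what makes the model attractive for an implementation without snapshots.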

Morpho I

- by Marinus Maris

The control architecture of the autonomous robot Morpho I, which was built by Marinus Maris, is based on a neuromorphic design. Basically, there is a complete sensory-motor chip for robot control that takes care of all sensing (a 23-pixel contrast retina array), edge position detection (winner-take-all with position encoding), decision making (attention bias), and motor steering (a spike generator that delivers pulses for a servo). Its task is to follow one of two possible lines. Which line is followed is controlled from outside the chip by adjusting the attention of the robot.
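The interplay of winner-take-all selection and attention bias can be mimicked in software. This is a hypothetical sketch of the principle only; the chip does this with analog circuitry, and the contrast values, the bias values, and the way the bias is added are assumptions made for the example.

```python
def winner_take_all(contrast, attention_bias=None):
    """Return the index of the pixel with the largest (biased) contrast."""
    if attention_bias is None:
        attention_bias = [0.0] * len(contrast)
    biased = [c + b for c, b in zip(contrast, attention_bias)]
    return max(range(len(biased)), key=biased.__getitem__)


# Hypothetical 23-pixel retina looking at two lines: one at pixel 5, one at pixel 17.
retina = [0.0] * 23
retina[5] = 0.8
retina[17] = 0.9

print(winner_take_all(retina))  # prints 17

# Biasing attention toward the left half of the retina selects the other line.
left_bias = [0.3 if i < 12 else 0.0 for i in range(23)]
print(winner_take_all(retina, left_bias))  # prints 5
```

The winning index is the position code; its offset from the centre pixel could then serve as the steering signal.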

Sita

- by Marinus Maris

Sita was built by Marinus Maris. Sita is built on a model car base, like its brother "Famez" (below). It is equipped with a 1D camera (64 pixels), 16 IR and ambient light sensors, bumpers, and a speech generator. The task of the robot is to run errands whenever asked. The speech generator (hopefully soon augmented with speech understanding capabilities) will enable the robot to interact verbally with humans.

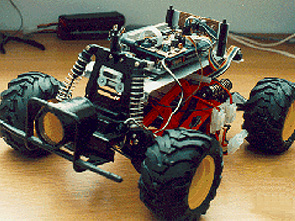

Didabot

- by Marinus Maris with system software by Rene Schaad and Daniel Regenass

Ten educational robots were built by Marinus Maris, with system software by Rene Schaad and Daniel Regenass, for use in student education in the context of Prof. Pfeifer's class "New AI". Features:

- Based on an R/C car (Tyco Scorcher)
- Very fast differential 4WD (4 of 6 wheels driven)
- Intel 16-bit 196KD microcontrollers (20 MHz)
- IR and ambient light sensors
- Programmable in C and assembler

Rufus T. Firefly I, II

- by Rene Schaad

Rufus T. Firefly was built by Rene Schaad. It is a multipurpose extensible platform for autonomous agents research.

Famez

- by Marinus Maris

Famez is a fast robot relying entirely on only one sensor (one ultrasonic range finder). Three of them were built at our laboratory by Marinus Maris based on model car kits. Its top speed is ~10 mph. It features Motorola MC68331 and HC11 microcontrollers.

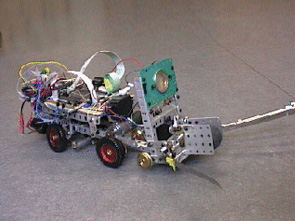

Junkyard Warrier ("Junkie")

- by Rene Schaad

This robot was built by Rene Schaad from "Stokys" metal construction parts. It features:

- Car-like steering
- 20 MHz Intel 196KD microcontroller
- Sonar
- 2 antennae
- Buzzer
- Gripper

Cyclope

- developed by the Laboratoire de microinformatique at the Swiss Federal Institute of Technology in Lausanne

Cyclope was developed at the Laboratoire de microinformatique at the Swiss Federal Institute of Technology in Lausanne, Switzerland. We own one unit for evaluation purposes. Features include:

- Circular shape, 12.5 cm diameter (5")
- HC11 microcontroller
- 64-element linear CCD array
- Bumpers
- Debugging board
- IR remote control
- Graphic LCD, etc.

Khepera series (gripper, camera, Khepera I, Khepera II)

- developed by the Laboratoire de microinformatique at the Swiss Federal Institute of Technology in Lausanne

Produced by K-team

Khepera was engineered at the Laboratoire de microinformatique at the Swiss Federal Institute of Technology in Lausanne, Switzerland. The AI Lab currently owns 15 Kheperas. Features include:

- Circular shape, 5.5 cm diameter (2.2")
- Small size that enables desktop experiments
- 2 DC motors for differential steering
- 20 min. autonomy, or power-by-wire
- Motorola MC68332 microcontroller
- Miniature gripper forthcoming