iCub.org




Run by the Robotics, Brain and Cognitive Sciences dept. at the Istituto Italiano di Tecnologia

Funded by the EU Commission under the Cognitive Systems and Robotics program

Product Portfolio

iCub

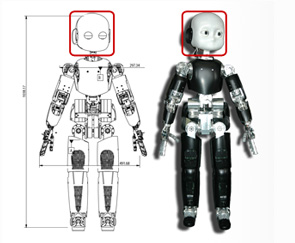

- The iCub is the humanoid robot developed at the Istituto Italiano di Tecnologia as part of the EU project RobotCub and subsequently adopted by more than 20 laboratories worldwide. It has 53 motors that move the head, arms and hands, waist, and legs. It can see and hear, and it has a sense of proprioception (body configuration) and of movement (using accelerometers and gyroscopes). We are working to extend these capabilities by giving the iCub a sense of touch and the ability to grade how much force it exerts on the environment.

Scientists at the Cognitive Humanoids Laboratory work at the forefront of robotics and neuroscience research, implementing models of cognition in humanoid robots. This diverse group aims to understand brain function and to build robot controllers that can learn and adapt from their mistakes.

Our activities encompass both hardware, which we call "bodyware", and software, which we call "mindware", with the long-term aim of building machines whose intelligence is comparable to that of humans. On the bodyware side we developed the iCub, a humanoid robot shaped like a 4-year-old child. At the same time, we are developing technologies for the next generation of robots, based on soft and adaptable materials for both sensing and processing. On the mindware side, the laboratory works on the robot's cognitive skills: visual, auditory and tactile perception, and the ability to gaze at, reach for and manipulate objects.

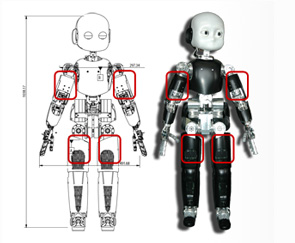

iCub 6-axial force/torque sensor

- The iCub mounts four 6-axis force/torque sensors, in the upper arms and upper legs. They are designed to be size-compatible with the ATI Mini-45 sensor. The electronics have been miniaturized to fit inside the sensor, which outputs digital data directly over CAN bus.

CFW-2, 10-port CAN bus PC104 card

- This is a multi-port PC104+ card that hosts 10 CAN bus ports (managed by two microcontrollers), two FireWire ports and an audio pre-amplifier. A large buffer (2 MB) stores CAN messages, and a DMA interface on the PCI bus is also provided.

iCub controllers

- The iCub controllers are small microcontroller boards based on the Freescale 56F807 chip. Each board connects via CAN bus to the host CPU (the PC104 card). They come in two types: one controls four brushed DC motors (0.5 A each), the other two brushless DC motors (48 V, 6 A continuous, 20 A peak). The brushed version is complemented by a small power supply; the brushless version consists of two parts, one for the logic and one for the amplifier.

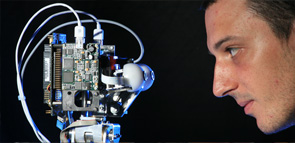

iCub head 1.1

- The iCub head has 6 degrees of freedom and carries two cameras (Dragonfly2), two microphones (with special pinnae), and gyroscopes and accelerometers (MTx). It also houses a dual-core PC104 computer with enough ports to control the entire robot, read sensor data, and send it out over a Gigabit Ethernet port.

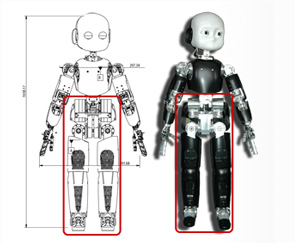

iCub arm and hand

- The iCub arm and hand are anthropomorphic and together have 16 degrees of freedom: 7 in the arm (including the wrist) and 9 in the hand. Eight of the hand's degrees of freedom are allocated to the thumb, index and middle fingers, enabling a fair degree of dexterity. The hand can be fitted with 108 tactile sensors in the fingertips and palm.

iCub legs and torso

- The iCub legs and torso together have 15 degrees of freedom. They were designed mainly for crawling on all fours, but tests have shown that bipedal walking is possible. Force/torque sensors are mounted in the upper part of each leg.
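The per-subsystem figures above can be cross-checked against the 53 motors quoted for the whole robot. The short Python sketch below assumes that the arm and hand figures apply to each side and that each degree of freedom is driven by one motor; the constant and function names are illustrative only.

```python
# Degrees of freedom per subsystem, as quoted on this page.
HEAD_DOF = 6
ARM_DOF = 7              # per arm, including the wrist
HAND_DOF = 9             # per hand
LEGS_AND_TORSO_DOF = 15  # legs and torso together


def total_dof() -> int:
    """Whole-body count: one head, two arm+hand chains, legs and torso."""
    return HEAD_DOF + 2 * (ARM_DOF + HAND_DOF) + LEGS_AND_TORSO_DOF


if __name__ == "__main__":
    print(total_dof())  # 53
```

The result, 6 + 32 + 15 = 53, matches the motor count given for the iCub, which supports reading the 16 arm-and-hand degrees of freedom as a per-side figure.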

- Force control is giving the iCub platform new life. This skill enables safe and gentle interaction between the robot and its human teachers. A short video shows an experimenter using this feature to teach simple actions to a brand-new iCub.

- The iCub's grasping skills have recently been improved as a result of the Poeticon EU project. Simple actions such as grasping are combined using spoken-language tools developed by the Poeticon teams. In this example, the iCub pours cereal into a cup.

- New visual skills of the iCub: independent motion tracking using optical flow. See how the iCub can easily track objects or people that move independently of it.

- The iCub met the Italian President in 2010 during his visit to Genoa and IIT. Here the President asks about the robot after receiving the latest IIT leaflet from the iCub's hand. Also in the picture: Giorgio Metta, Giulio Sandini and Roberto Cingolani.

- The iCub was in Hannover in April 2010 as part of the Italian delegation to the international trade fair. Here it is interacting with German Chancellor Angela Merkel while showing its movement coordination skills and software reliability. The iCub ran uninterrupted for three full days, attracting thousands of curious visitors.

- Lorenzo, in the picture, is checking the new iCub before running a manipulation experiment. The iCub is being fitted with a capacitive skin system in the fingertips and palms that measures contact and enables safe grasping strategies.

iCub skin and fingertips

- The iCub can mount a capacitive skin system in two forms: fingertips and generic body skin. The skin is based on a modular triangular structure with minimal external wiring. Each taxel can be sampled at 50 Hz with 8-bit resolution, and the readings are sent via CAN bus to a CFW-PC104 card.
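The sampling figures above set the raw data rate the skin puts on the CAN bus. The back-of-the-envelope Python sketch below assumes each taxel contributes one 8-bit sample per cycle and, hypothetically, that readings are packed eight per frame (the maximum data field of a classical CAN frame). Framing overhead and the actual iCub message layout are not modelled, and the function names are illustrative.

```python
import math

SAMPLE_RATE_HZ = 50     # per-taxel sampling rate quoted above
BITS_PER_SAMPLE = 8     # per-taxel resolution quoted above
CAN_PAYLOAD_BYTES = 8   # maximum data field of a classical CAN frame


def payload_bits_per_second(n_taxels: int) -> int:
    """Raw sensor payload only; CAN framing overhead is not included."""
    return n_taxels * SAMPLE_RATE_HZ * BITS_PER_SAMPLE


def frames_per_second(n_taxels: int) -> int:
    """Hypothetical packing: one 8-bit reading per data byte, 8 readings per frame."""
    bytes_per_cycle = n_taxels * BITS_PER_SAMPLE // 8
    frames_per_cycle = math.ceil(bytes_per_cycle / CAN_PAYLOAD_BYTES)
    return frames_per_cycle * SAMPLE_RATE_HZ


if __name__ == "__main__":
    n = 108  # tactile sensors in one hand (fingertips and palm)
    print(payload_bits_per_second(n), "bit/s of payload")  # 43200
    print(frames_per_second(n), "CAN frames/s")            # 700
```

For a fully sensorized hand this is a modest load; the real bus usage will be higher once frame headers, bit stuffing and any per-module addressing are taken into account.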

iKart

- This is a holonomic mobile base for the iCub. It mounts six Swedish wheels, a high-performance i7 CPU, a wireless connection and high-performance Li-ion batteries. The iCub can stand on top of it and control the base through a standard interface.
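The text does not describe the iKart's kinematics, so the following is only a generic sketch of how a holonomic base with omnidirectional wheels can turn a desired body velocity into wheel speeds. It assumes (none of this comes from the source) that each Swedish wheel behaves like an omni wheel with rollers at 90°, that the six wheels sit evenly on a ring of hypothetical radius 0.25 m, and a hypothetical wheel radius of 0.05 m; wheels with 45° rollers would need a modified projection. The function names are illustrative, not part of any iCub interface.

```python
import math
from typing import List, Tuple

# (x, y, alpha): wheel contact point in metres relative to the base centre,
# and the heading of the wheel's driven (rolling) direction in radians.
Wheel = Tuple[float, float, float]


def ring_layout(n: int, radius: float) -> List[Wheel]:
    """Hypothetical layout: n wheels evenly spaced on a circle, driven tangentially."""
    layout = []
    for i in range(n):
        theta = 2.0 * math.pi * i / n
        layout.append((radius * math.cos(theta),
                       radius * math.sin(theta),
                       theta + math.pi / 2.0))
    return layout


def wheel_speeds(vx: float, vy: float, omega: float,
                 layout: List[Wheel], wheel_radius: float) -> List[float]:
    """Inverse kinematics for omni wheels with 90-degree rollers.

    Maps the body twist (vx, vy, omega) to each wheel's angular speed in rad/s.
    The free rollers let a wheel slip sideways, so the wheel only has to
    produce the contact-point velocity component along its driven direction.
    """
    speeds = []
    for x, y, alpha in layout:
        # Contact-point velocity: v + omega x r (planar cross product).
        cx = vx - omega * y
        cy = vy + omega * x
        rim_speed = cx * math.cos(alpha) + cy * math.sin(alpha)
        speeds.append(rim_speed / wheel_radius)
    return speeds


if __name__ == "__main__":
    layout = ring_layout(6, radius=0.25)
    # Pure rotation at 1 rad/s: every wheel spins at radius / wheel_radius.
    print(wheel_speeds(0.0, 0.0, 1.0, layout, wheel_radius=0.05))
    # Sideways translation at 0.2 m/s with no rotation.
    print(wheel_speeds(0.0, 0.2, 0.0, layout, wheel_radius=0.05))
```

With this layout each wheel's row in the mapping is (-sin θ, cos θ, R), and six evenly spaced rows span all three body velocities, so any combination of vx, vy and ω can be commanded; that is what makes such a base holonomic.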

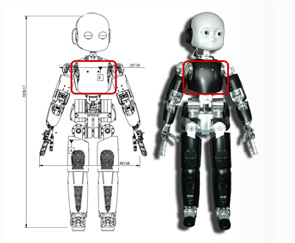

iCub 2.0

- iCub 2.0 is our new, experimental iCub (a work in progress). It has been upgraded with joint-level torque measurement, tension sensors for the tendons, full-body skin, fingertips, a new head, additional high-resolution encoders on all brushless motors, many small improvements to the mechanics and wiring, and a new foot design for bipedal locomotion.

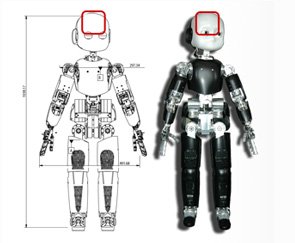

iCub head 2.0

- The new head (tentatively called 2.0) is at an advanced stage of design and prototyping. The neck has been redesigned for more torque, and the eyes will mount zero-backlash harmonic drive gears. Further small modifications have been made, especially to support accurate camera calibration.

Projects

RobotCub

- The goal of RobotCub was to study cognition by building a humanoid robot the size of a 3.5-year-old child: the iCub. It is a fully open-source hardware and software project, one of a kind, and the project that started the iCub in both hardware and software.

Xperience

- Xperience will demonstrate that state-of-the-art enactive systems can be significantly extended by using structural bootstrapping to generate new knowledge. This process is founded on explorative knowledge acquisition, and subsequently validated through experience-based generalization.

EFAA

- The Experimental Functional Android Assistant (EFAA) project will contribute to the development of socially intelligent humanoids by advancing the state of the art both in individual human-like social capabilities and in their integration into a consistent architecture.

MACSi

- The MACSi project is a developmental robotics project based on the iCub humanoid robot. It is funded as an ANR Blanc project from 2010 to 2012.

Darwin

- The Darwin project aims to develop an "acting, learning and reasoning" assembler robot that will ultimately be capable of assembling complex objects from their constituent parts and disassembling them.

ITALK

- The ITALK project aims to develop artificial embodied agents able to acquire complex behavioural, cognitive, and linguistic skills through individual and social learning. This will be achieved through experiments with the iCub humanoid robot.

Poeticon

- POETICON is a project that explores the poetics of everyday life, i.e. the synthesis of sensorimotor representations and natural language in everyday human interaction. This relates to a long-standing problem in AI: how meaning emerges.

CHRIS

- The CHRIS project (Cooperative Human Robot Interaction Systems) addresses the fundamental issues which enable safe Human Robot Interaction (HRI) from the motoric as well as cognitive point of view.

RobotDoc

- The RobotDoC Collegium is a multi-national doctoral training network providing interdisciplinary training in developmental cognitive robotics. The RobotDoc Fellows will acquire hands-on experience through experiments with the open-source humanoid robot iCub.

Roboskin

- RoboSKIN will develop and demonstrate a range of new robot capabilities based on the tactile feedback provided by a robotic skin from large areas of the robot body. Up to now, a principled investigation of these topics has been limited by the lack of tactile sensing technologies.

Amarsi

- The motor skills of humans and animals are still astonishing when compared with those of robots. AMARSi aims at a qualitative jump in robotic motor skills towards biological richness.

IM-CLeVeR

- IM-CLeVeR aims to develop a new methodology for designing robot controllers that can cumulatively learn new, efficient skills through autonomous development based on intrinsic motivations, and reuse those skills to accomplish multiple, complex, externally assigned tasks.

eMorph

- The goal of the eMorph project is to design asynchronous vision sensors with non-uniform morphology, using analog VLSI neuromorphic circuits, and to develop a supporting data-driven asynchronous computational paradigm for machine-vision.

ROSSI

- Starting from the assumption that cognition is embodied, the ROSSI project addresses the question of how the possibility of communication between agents (e.g. humans and robots) is affected by differences in sensorimotor capacities.